February 2012

Artist: Gary Hill. Production: Reilly Donovan. Exhibition:

Active Presence - Action, Object and Public. Venue: Museo MARCO,

Vigo, Galicia (Spain). Curators: Sergio Edelsztein and Kathleen

Forde

installation, interactive, kinect, performance, processing,

projection, sound

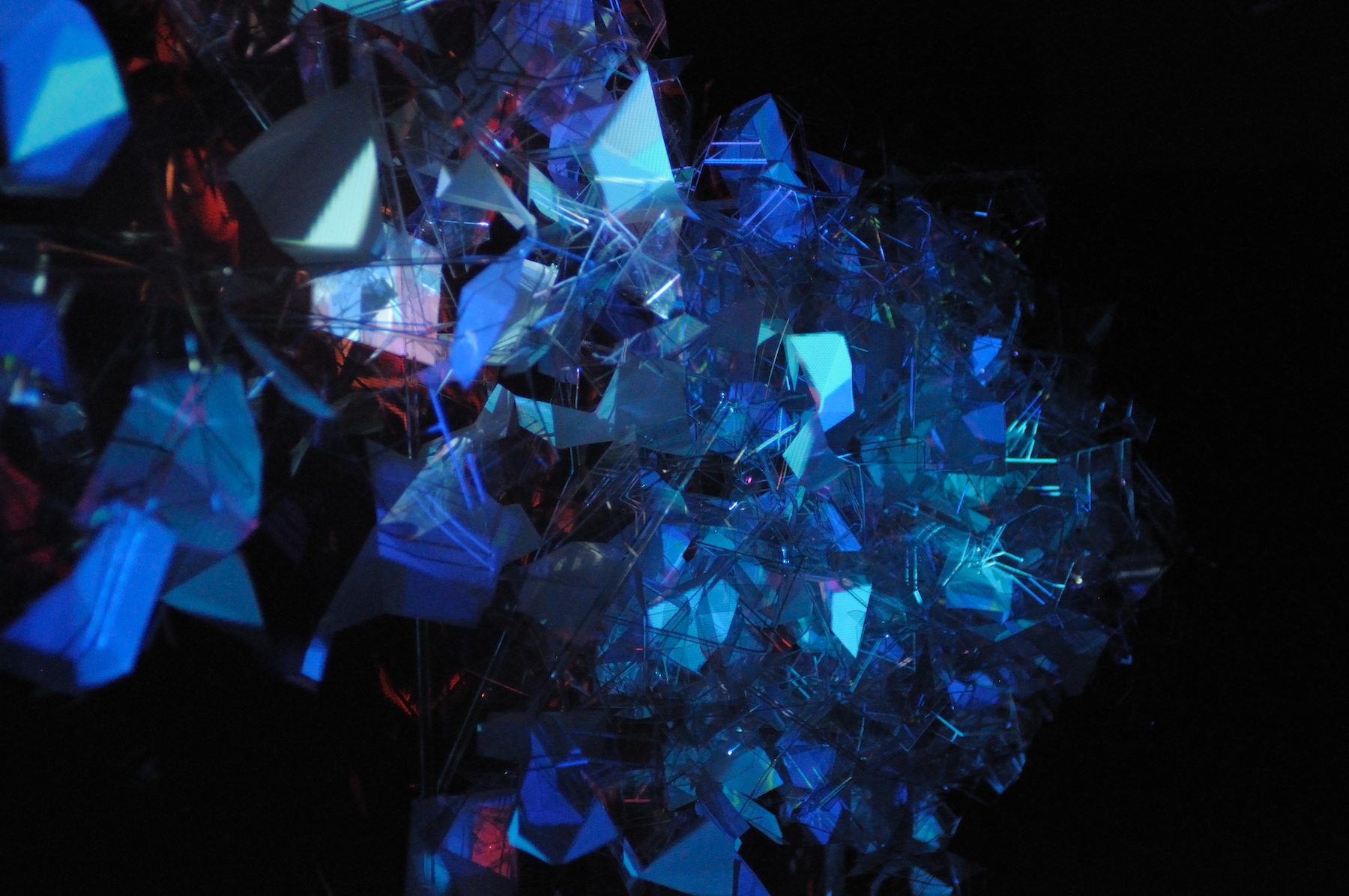

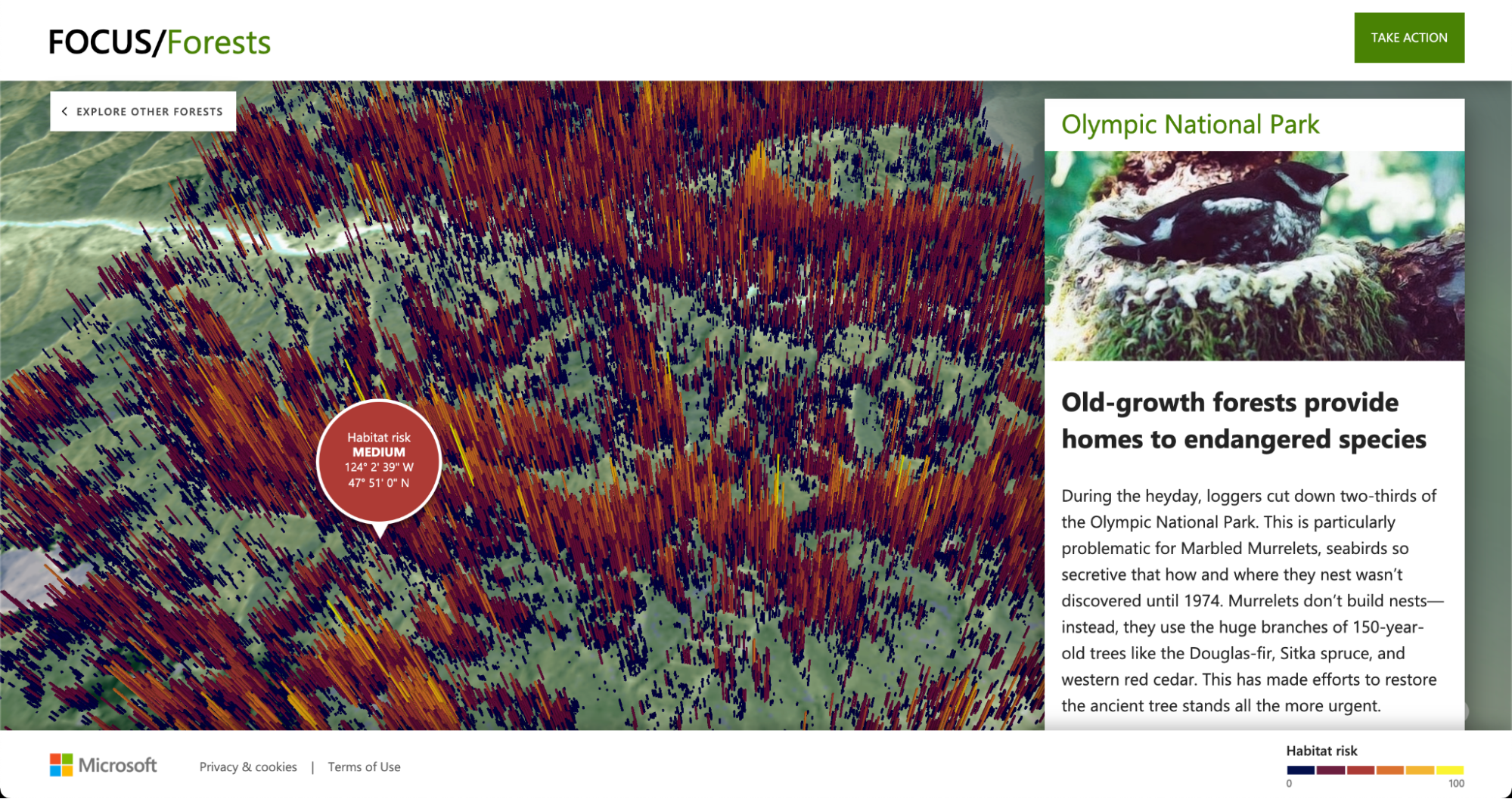

When I was first hired at Süperfad, I created a set of custom

software tools for media artist Gary Hill’s 2012 work Writing Corpora.

The new work was created for the international group exhibition

Active Presence: Action, Object and Public, which debuted at the

Museo MARCO in Vigo, Galicia (Spain) in February 2012.

The exhibition, curated by Sergio Edelsztein and Kathleen Forde,

focused on artists whose practice contains both performance and

installation elements. The overall gist of Writing Corpora,

without paraphrasing the artist too much, is physical gestures

triggering text, video and audio relating to idiomatic phrases

that refer to the human body (e.g. “put your foot in your mouth”),

in both English and, in this version, Galician (the native tongue

in Vigo). The piece is a continuation of the artist’s conceptual

work focused on the convergence of body and language, using our

current era’s ever-evolving technologies for self-expression.

For this project Süperfad provided technical support and code

development, creating a software framework that allowed the artist

to play back media elements throughout the gallery space with no

physical controller other than the performer’s movements. The

result is a full-featured toolkit that can trigger any audio

and/or video media with practically any body pose or gesture.

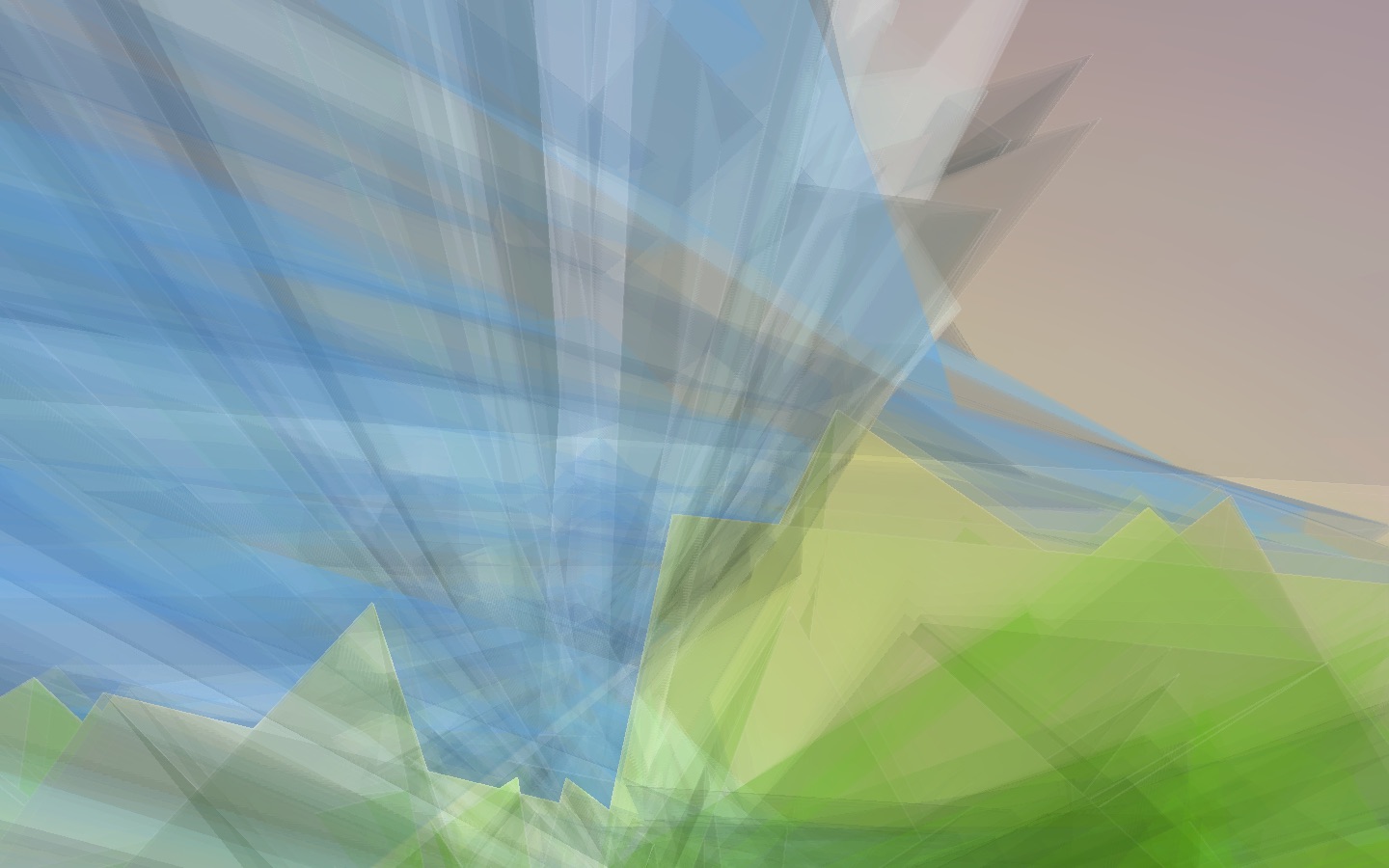

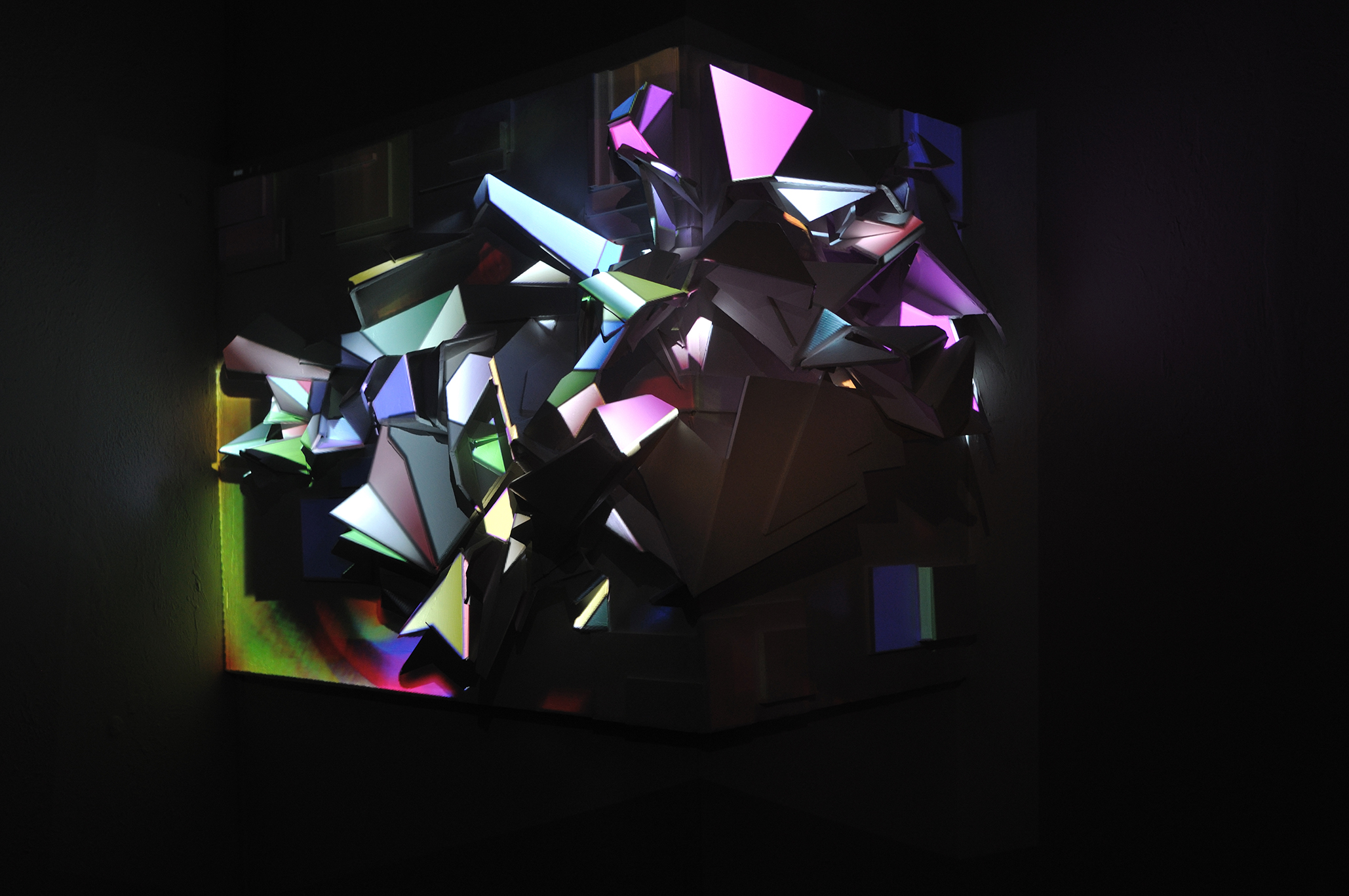

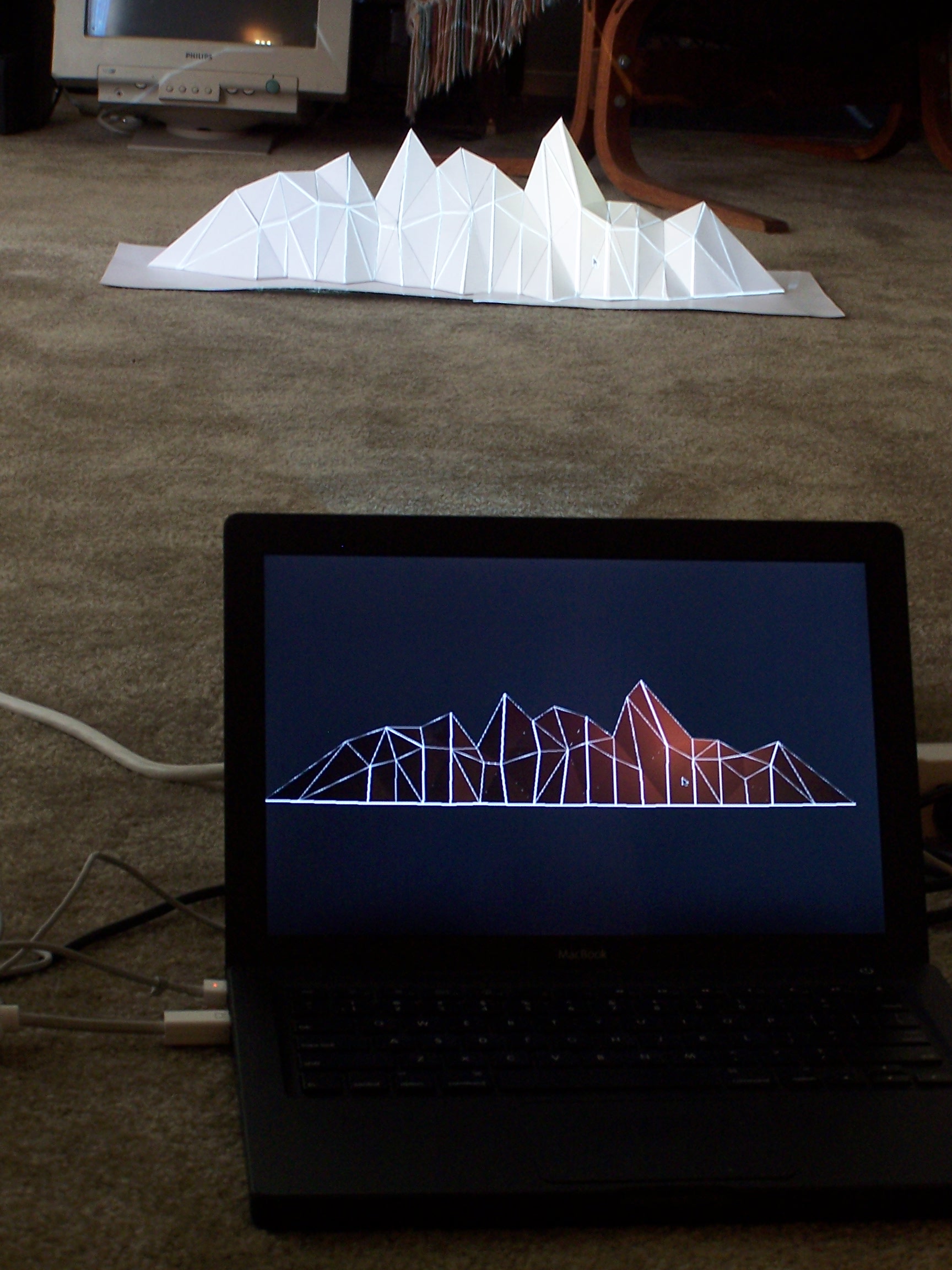

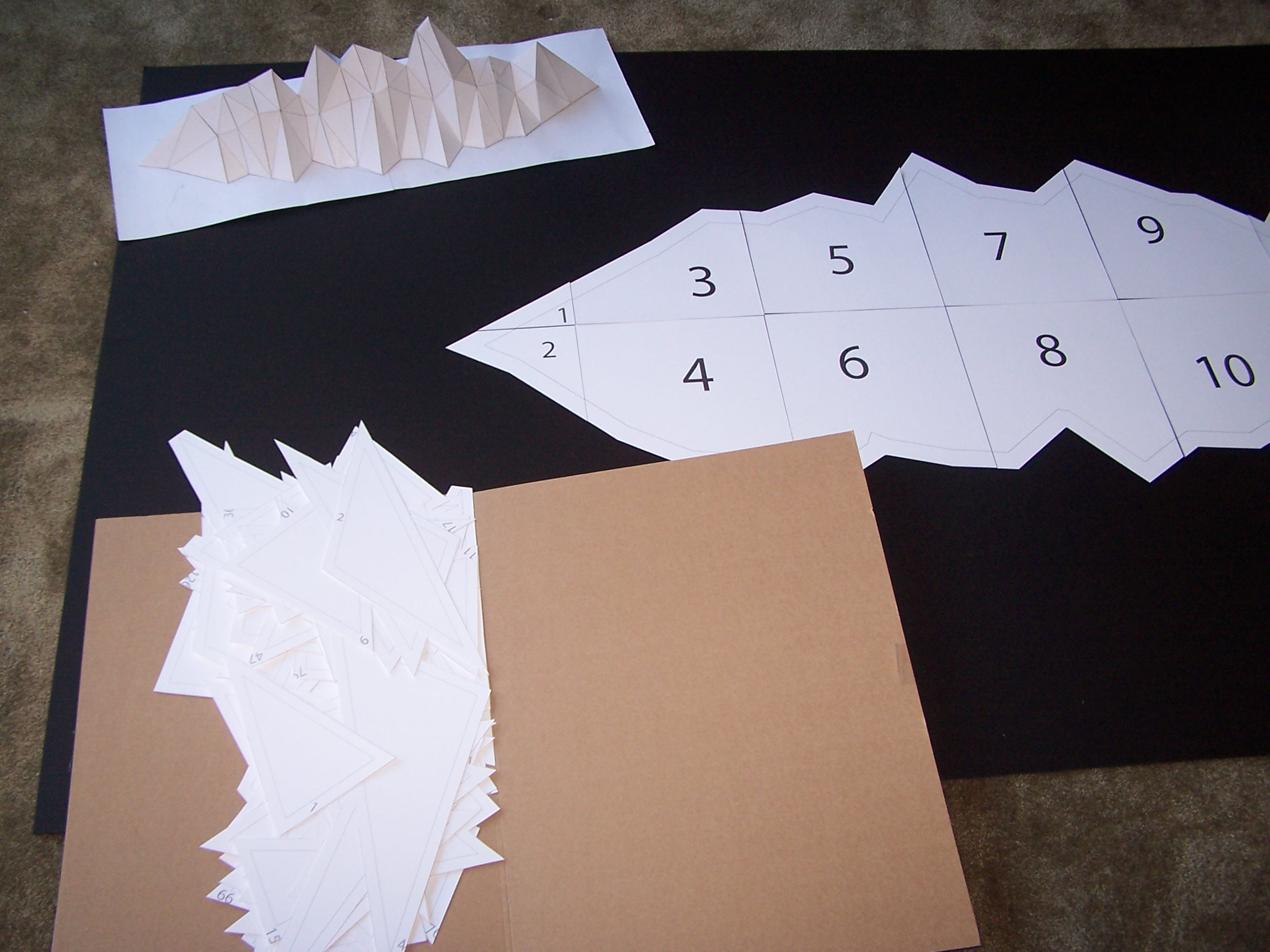

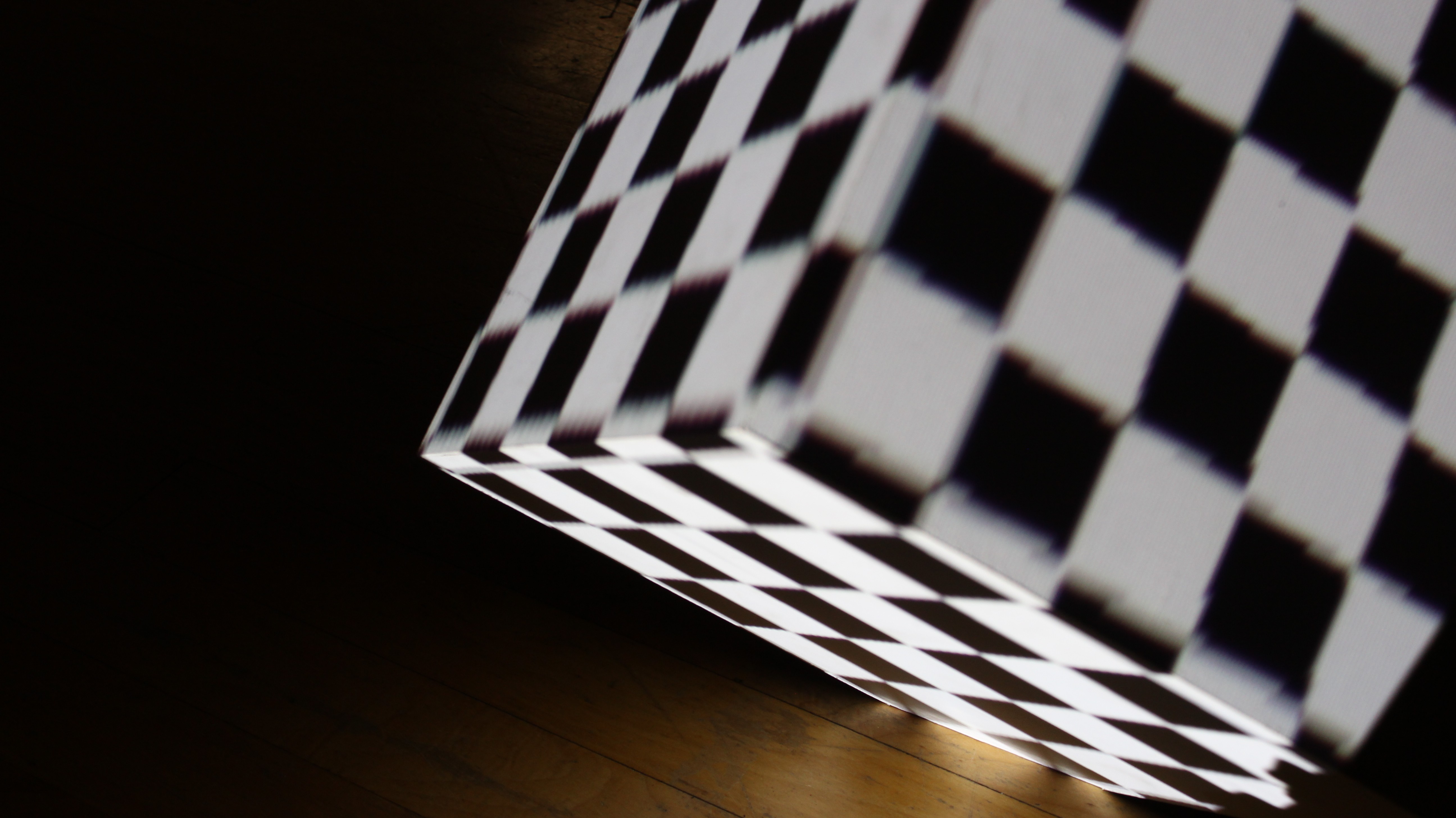

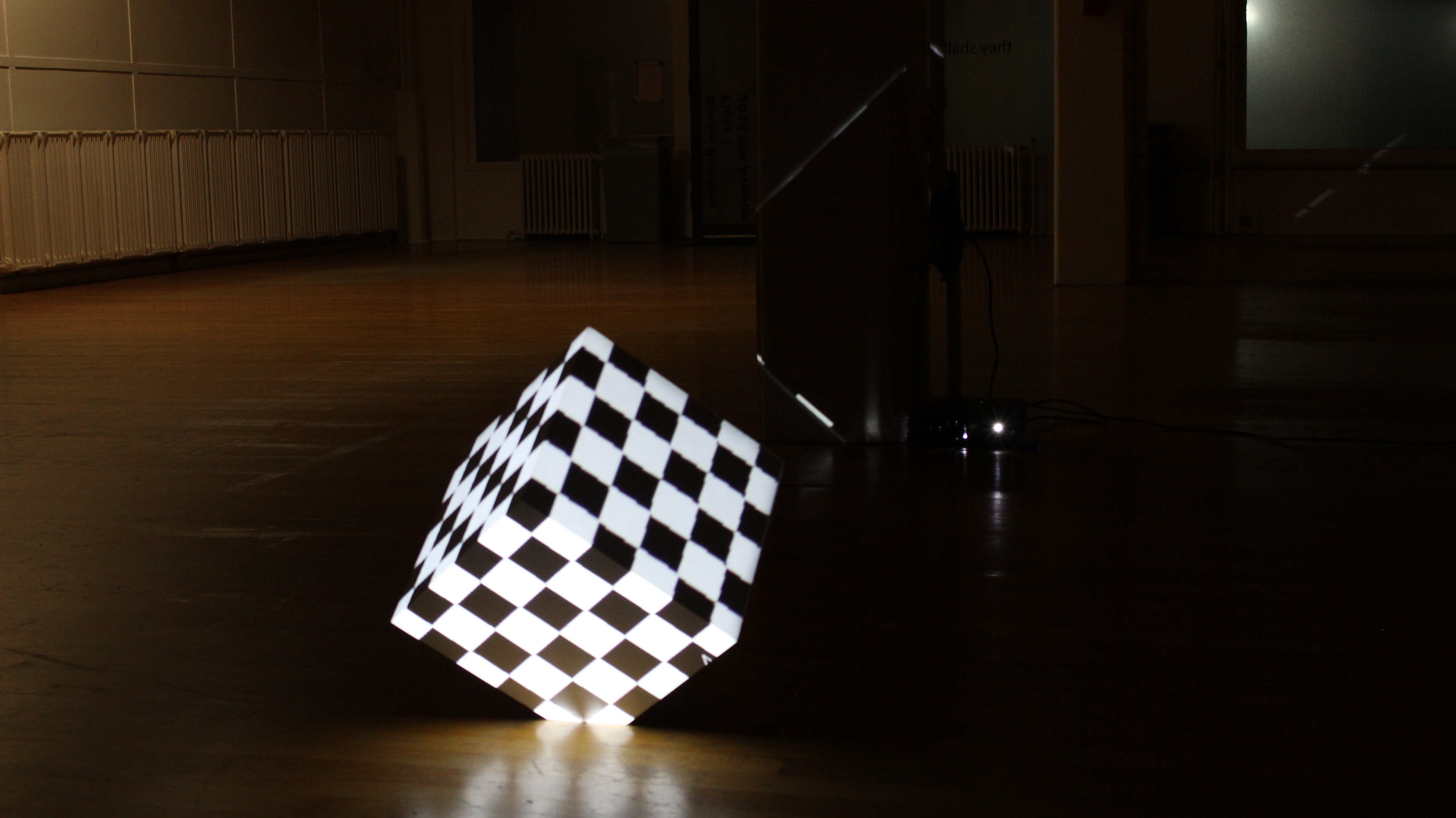

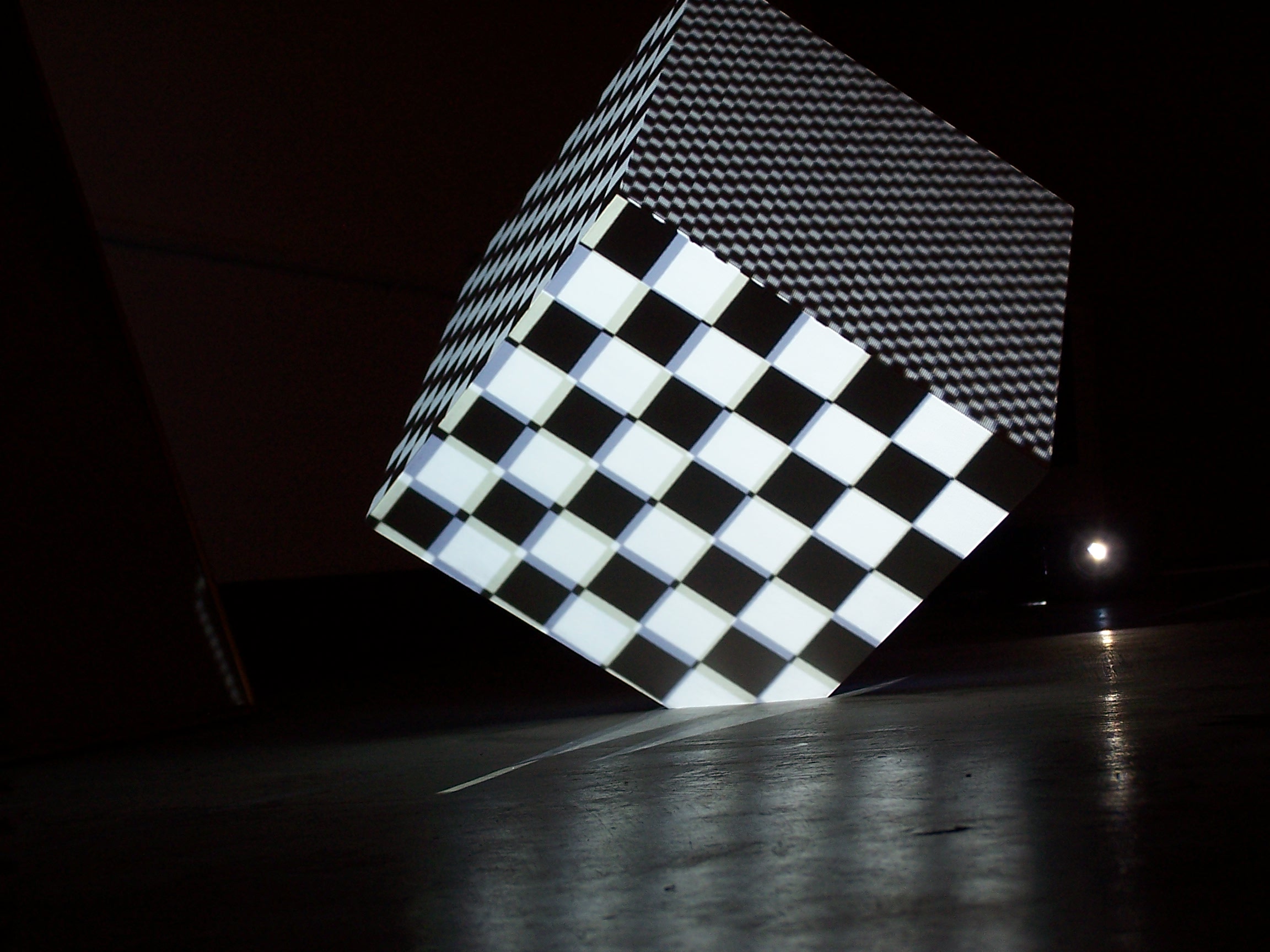

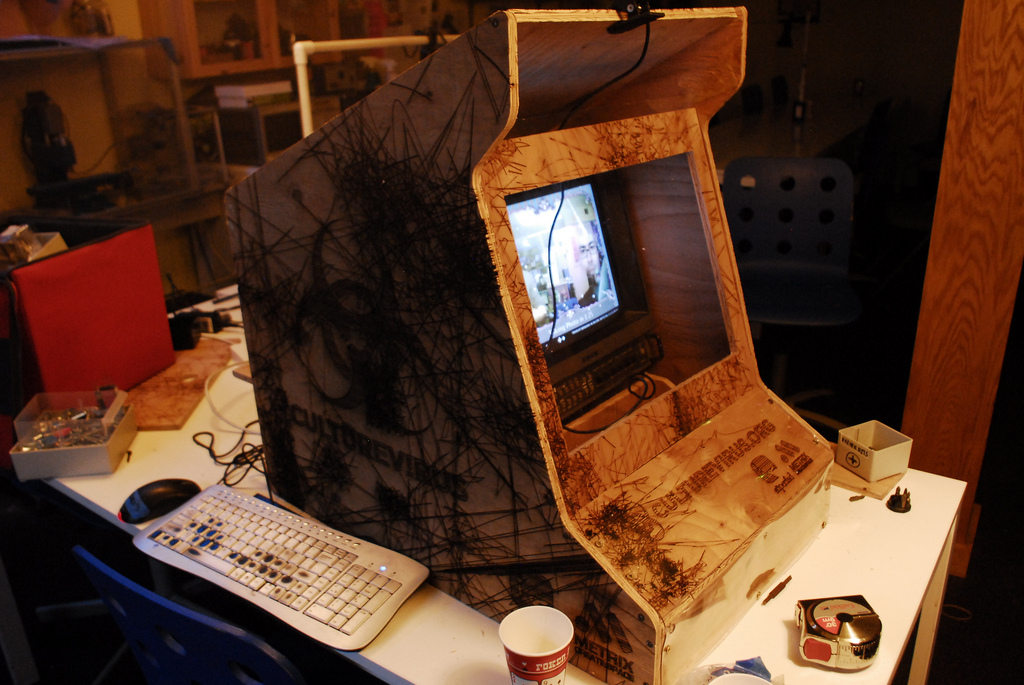

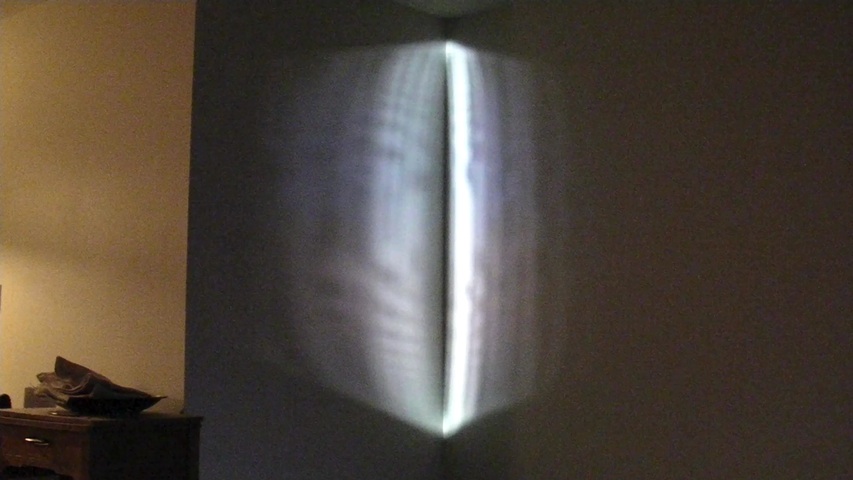

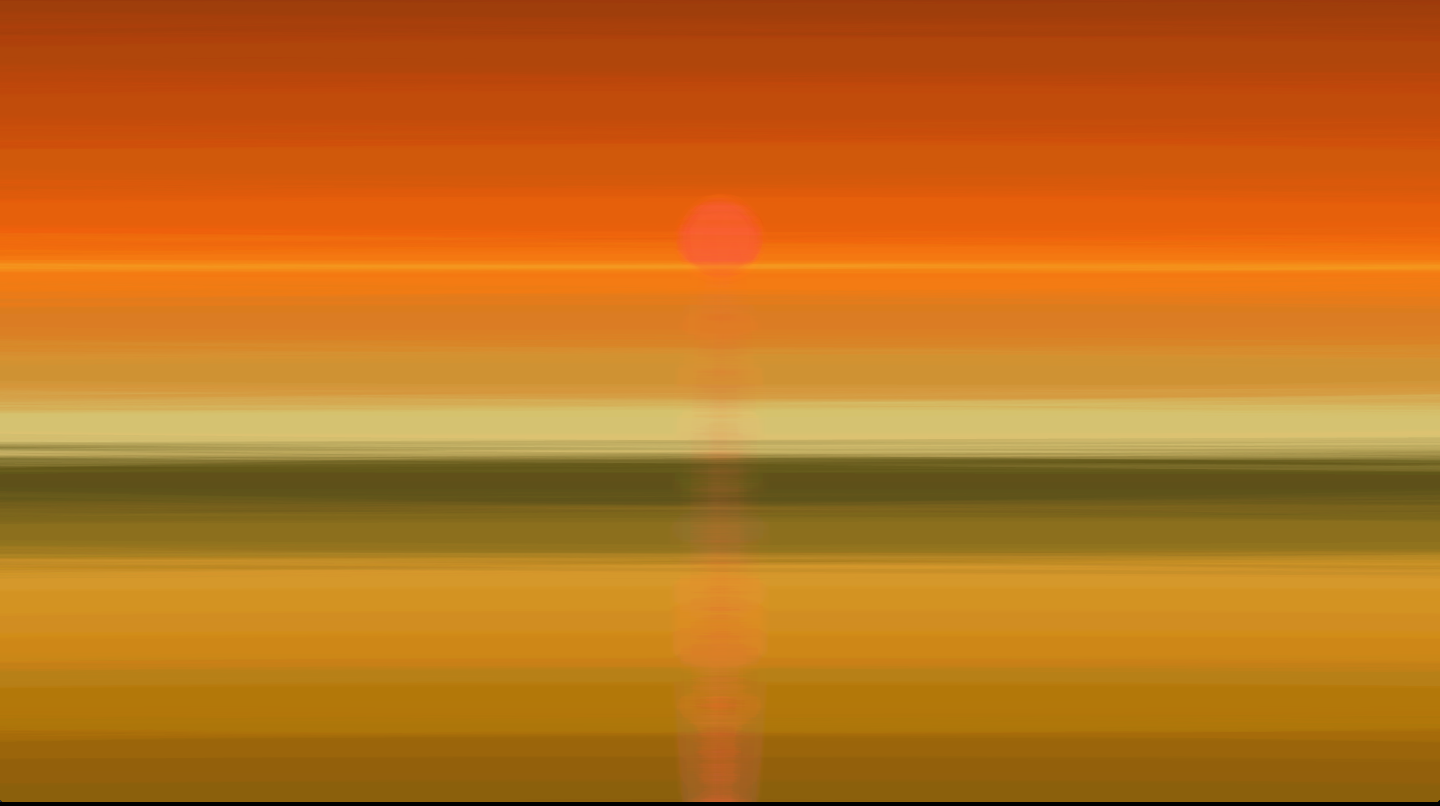

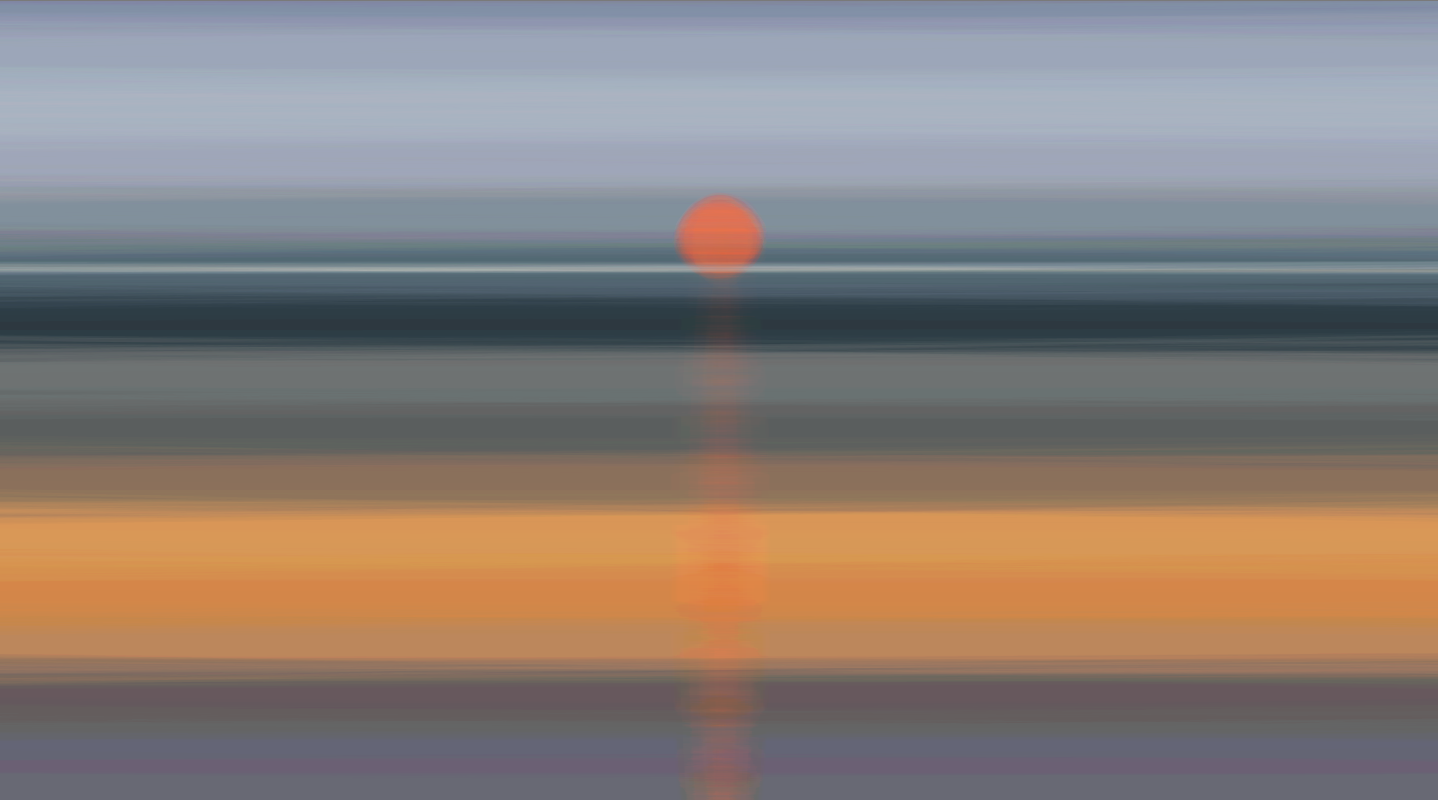

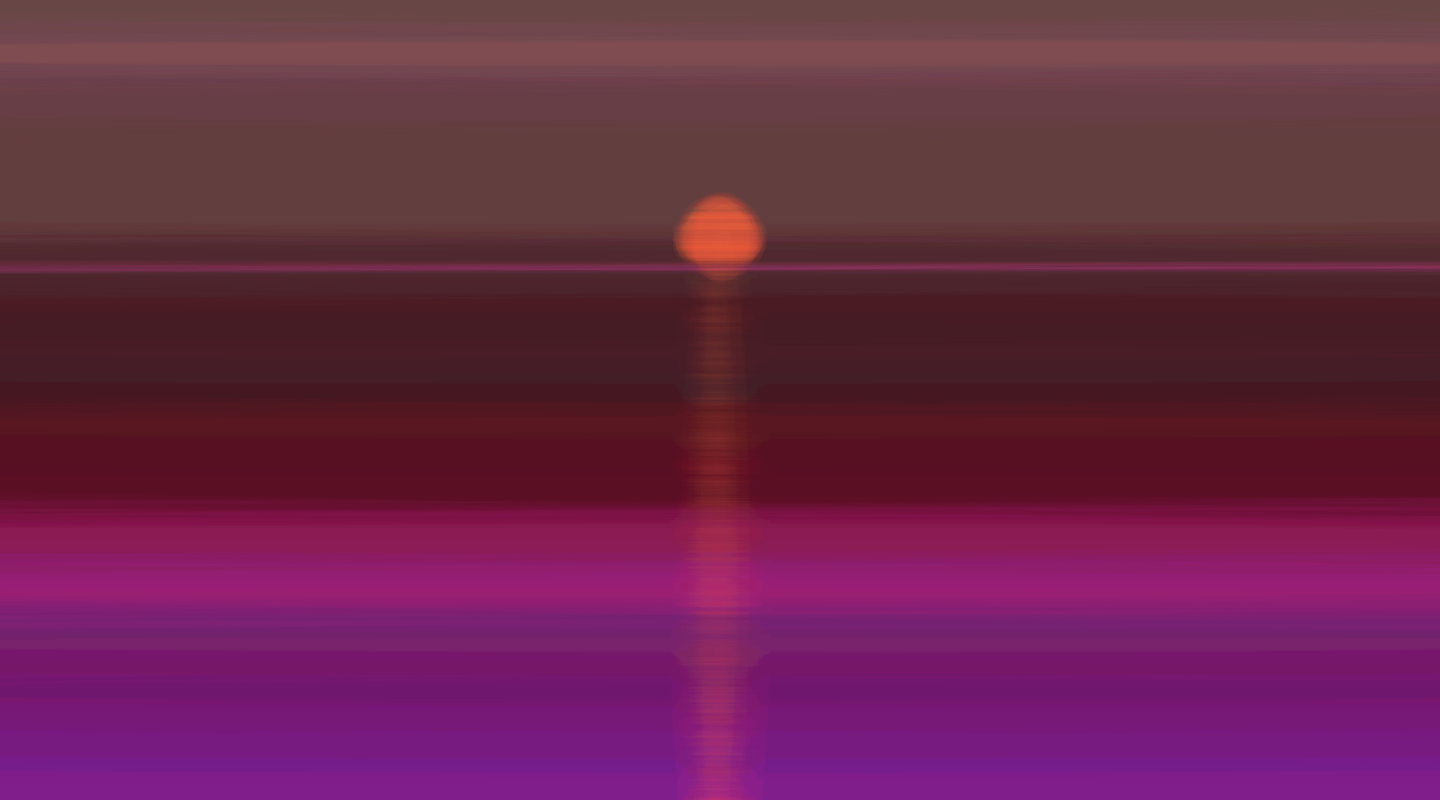

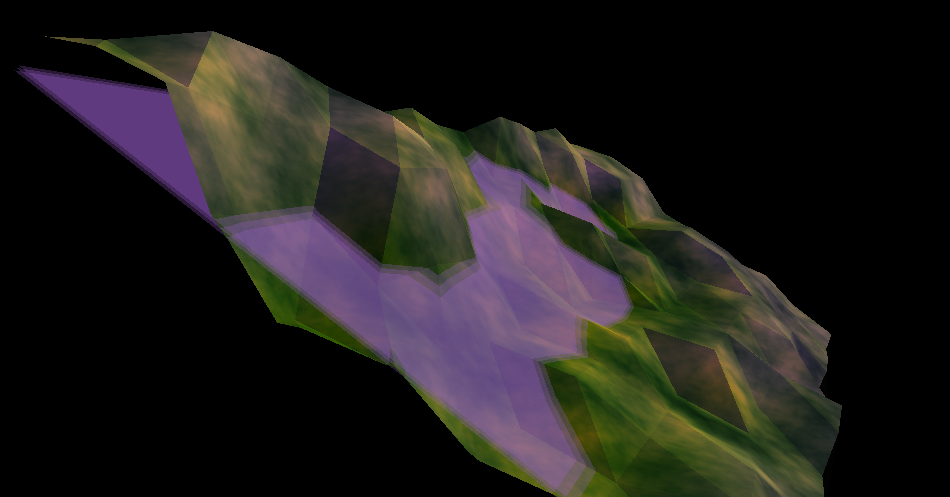

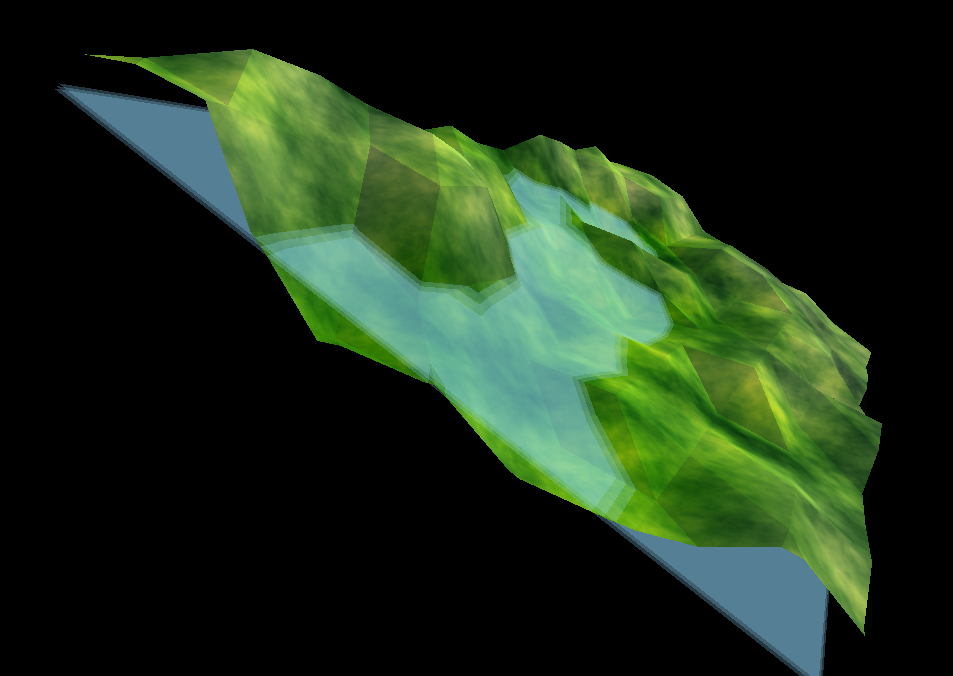

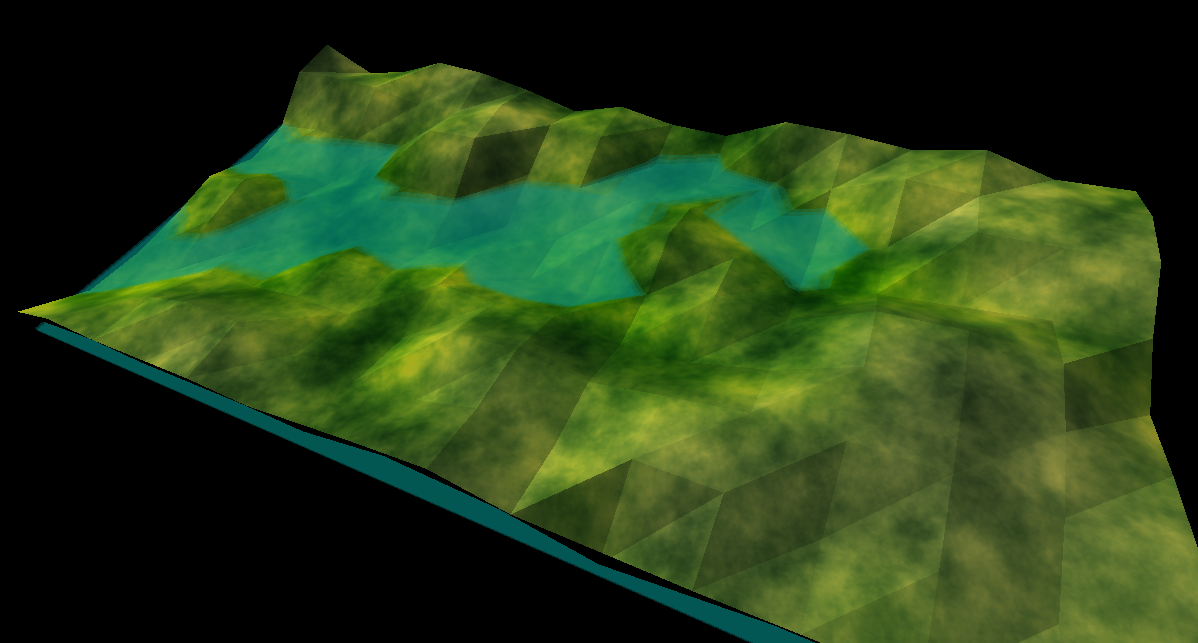

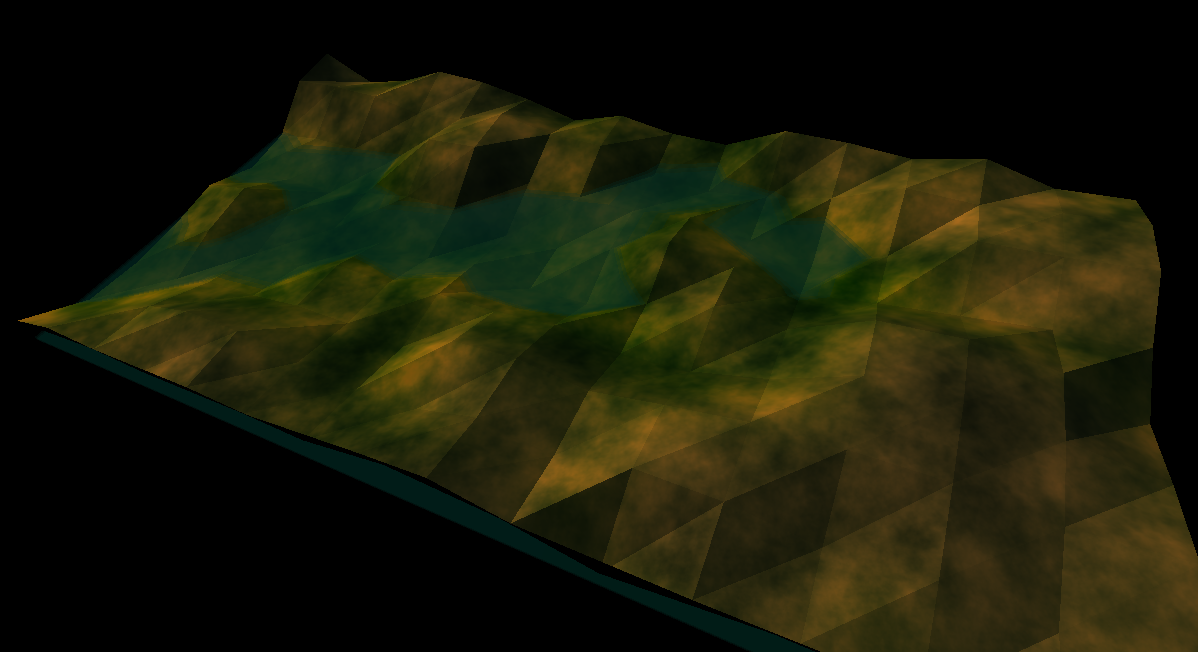

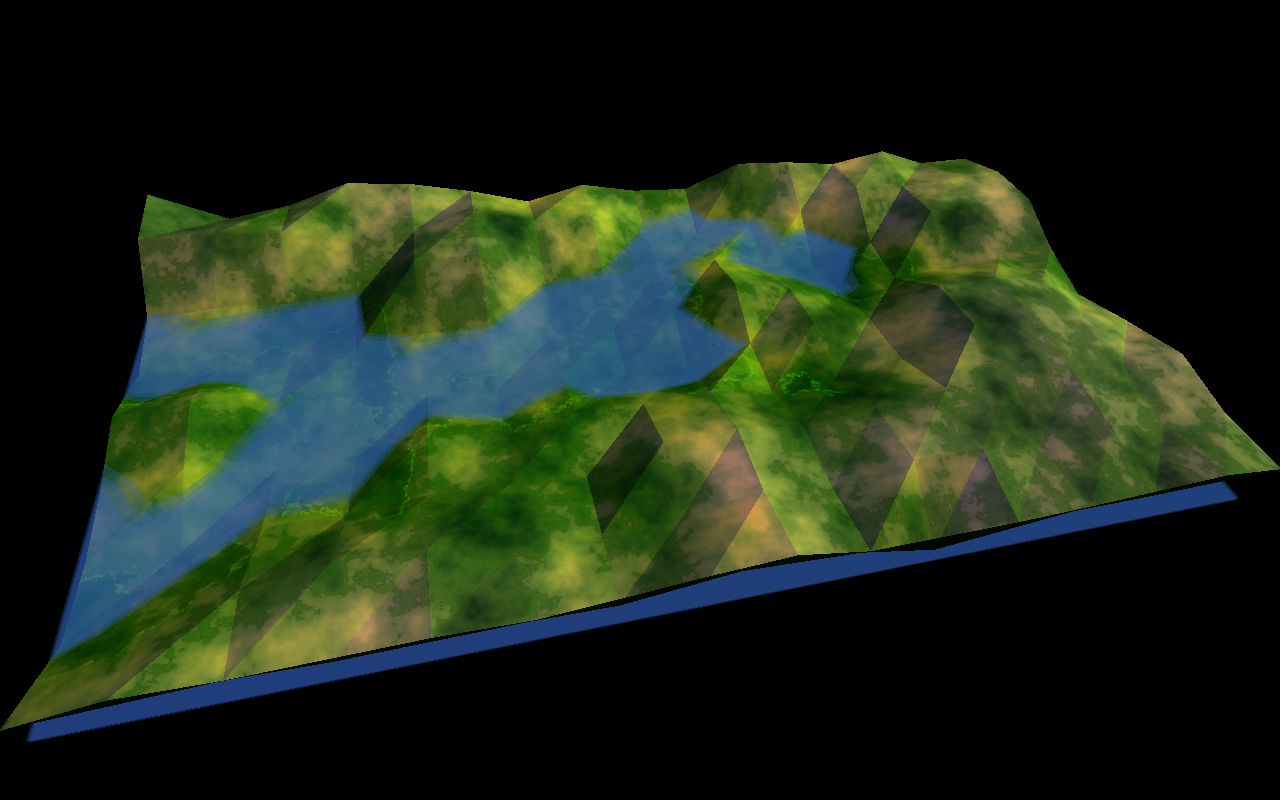

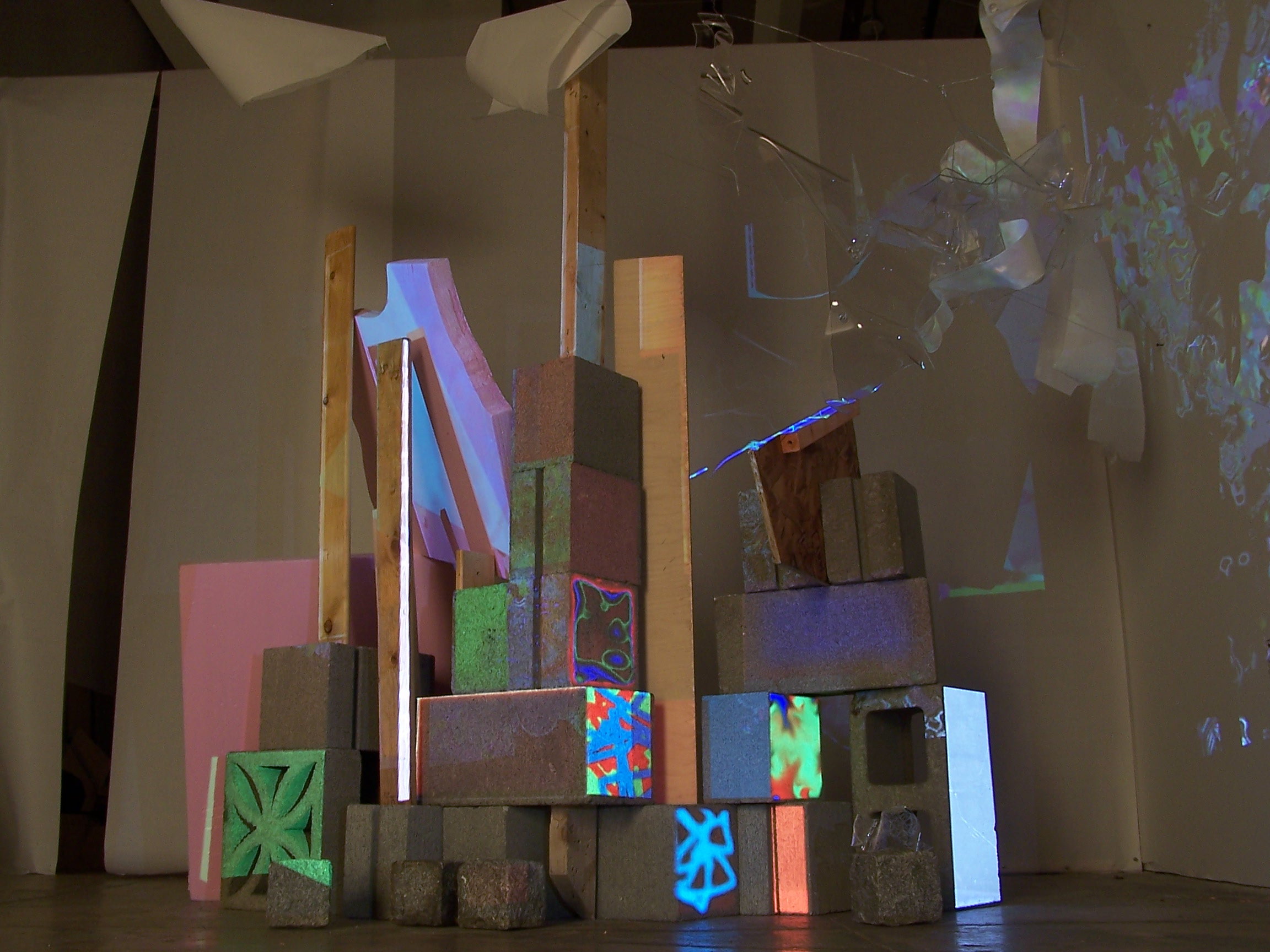

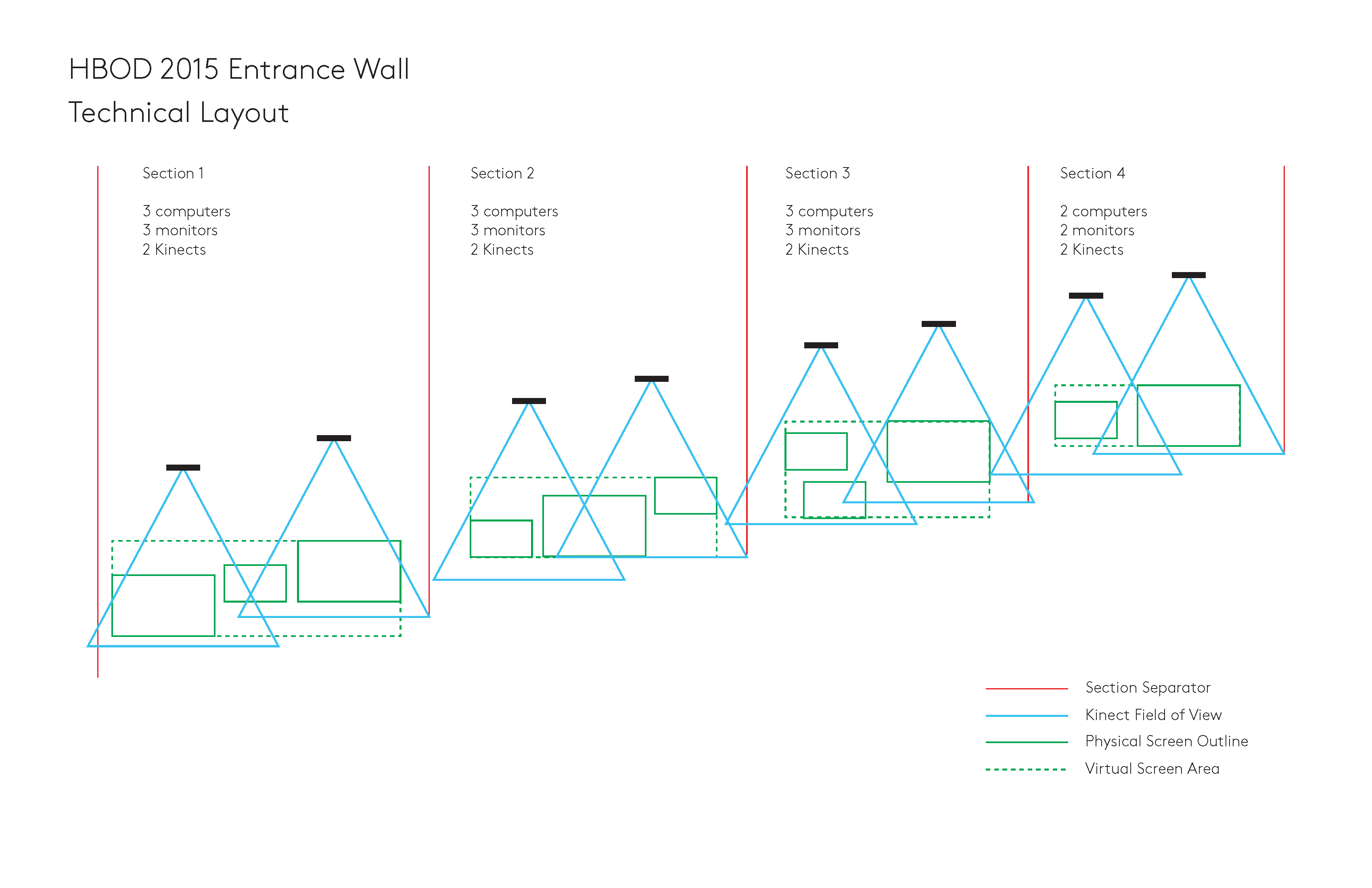

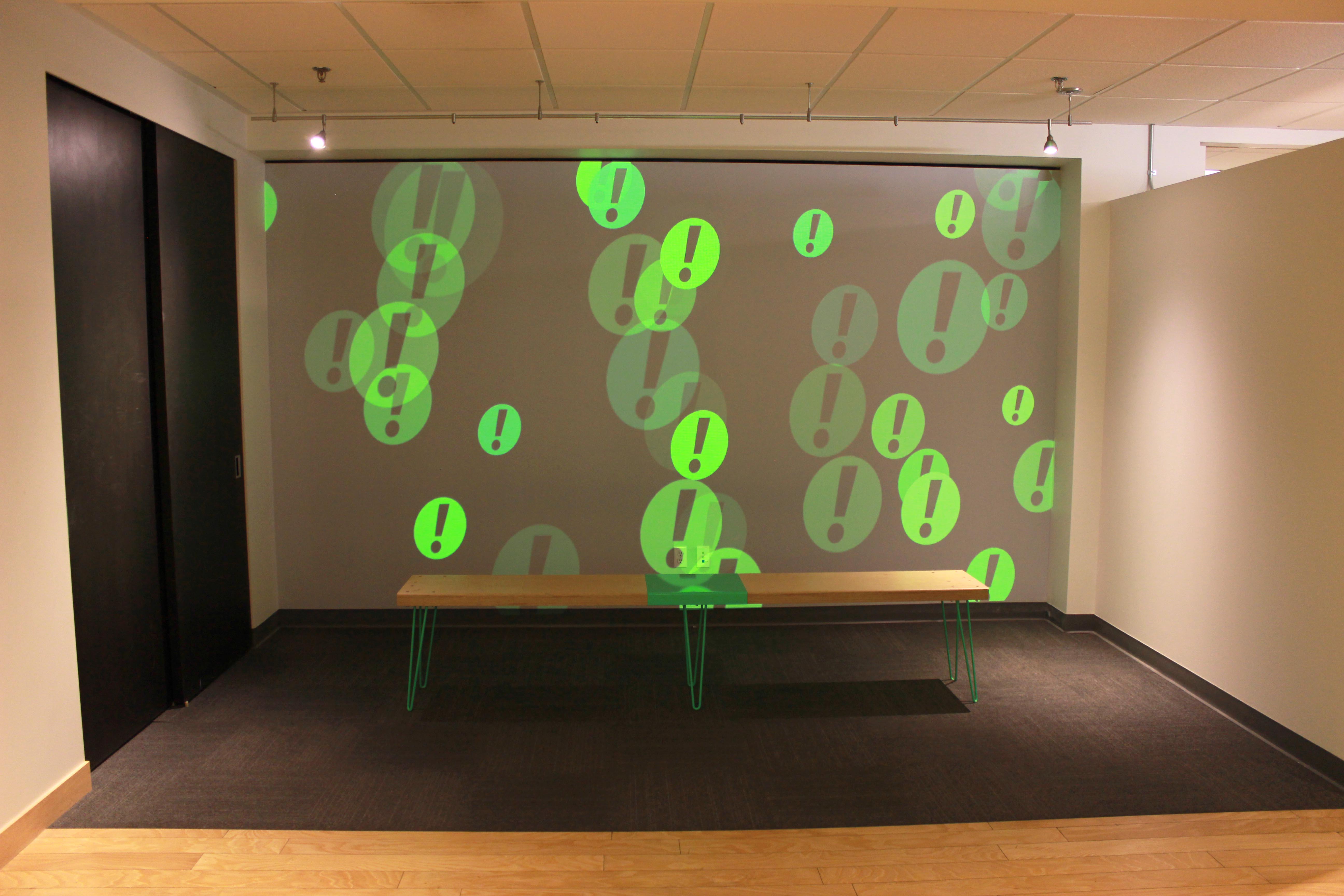

Overview

We created two systems of custom software for Writing

Corpora. The first was a fluid-dynamics “touch-floor”, an alphabet

soup of letters that congeal into legible phrases and words when

certain regions of the floor projection are stepped on, which is

also an interactive element that remains for current and future

gallery attendees. When a phrase formed in the text fluid it also

simultaneously played an audio clip on overhead speakers of the

same idiom being spoken in Galician if the text was in English and

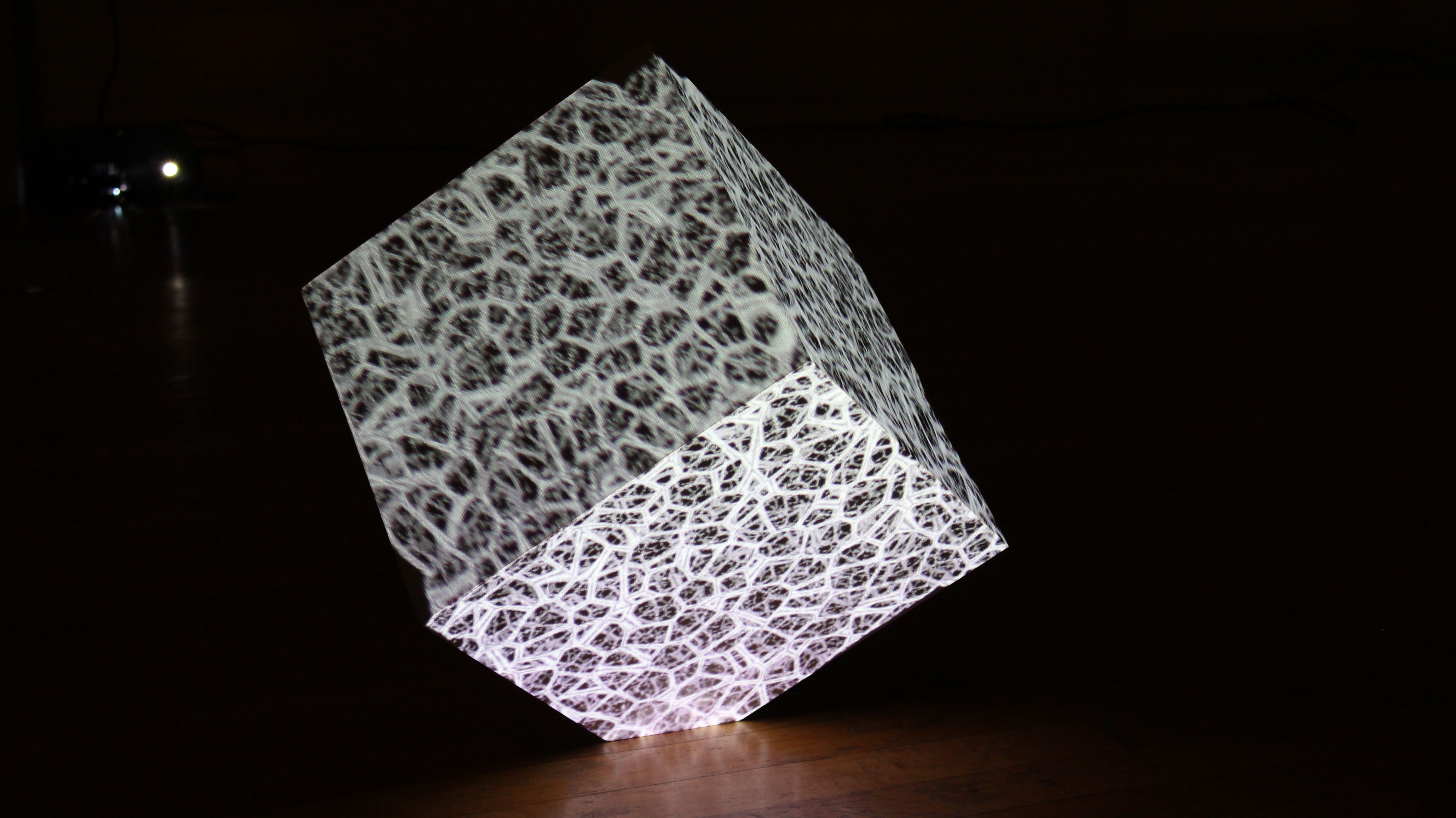

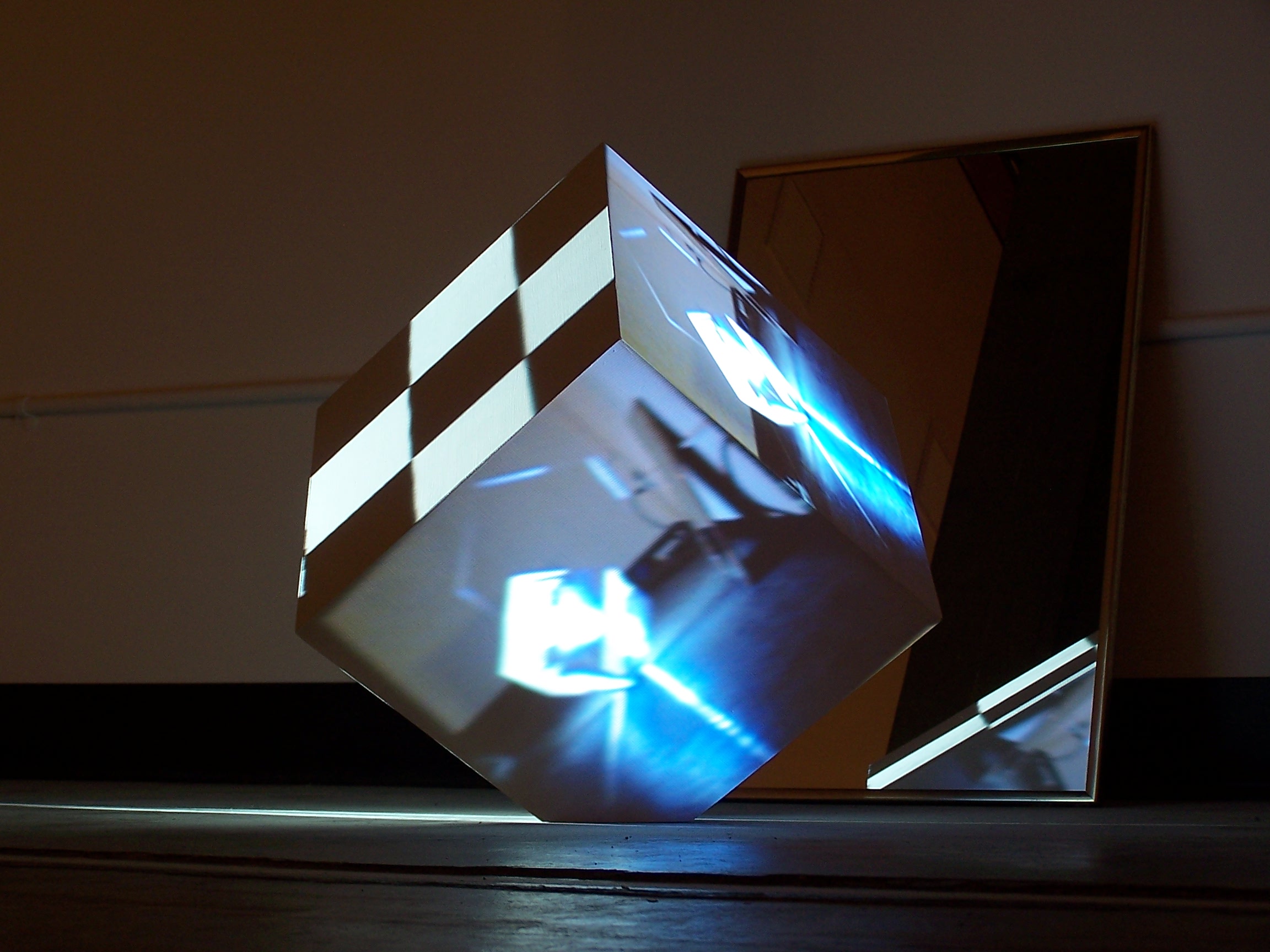

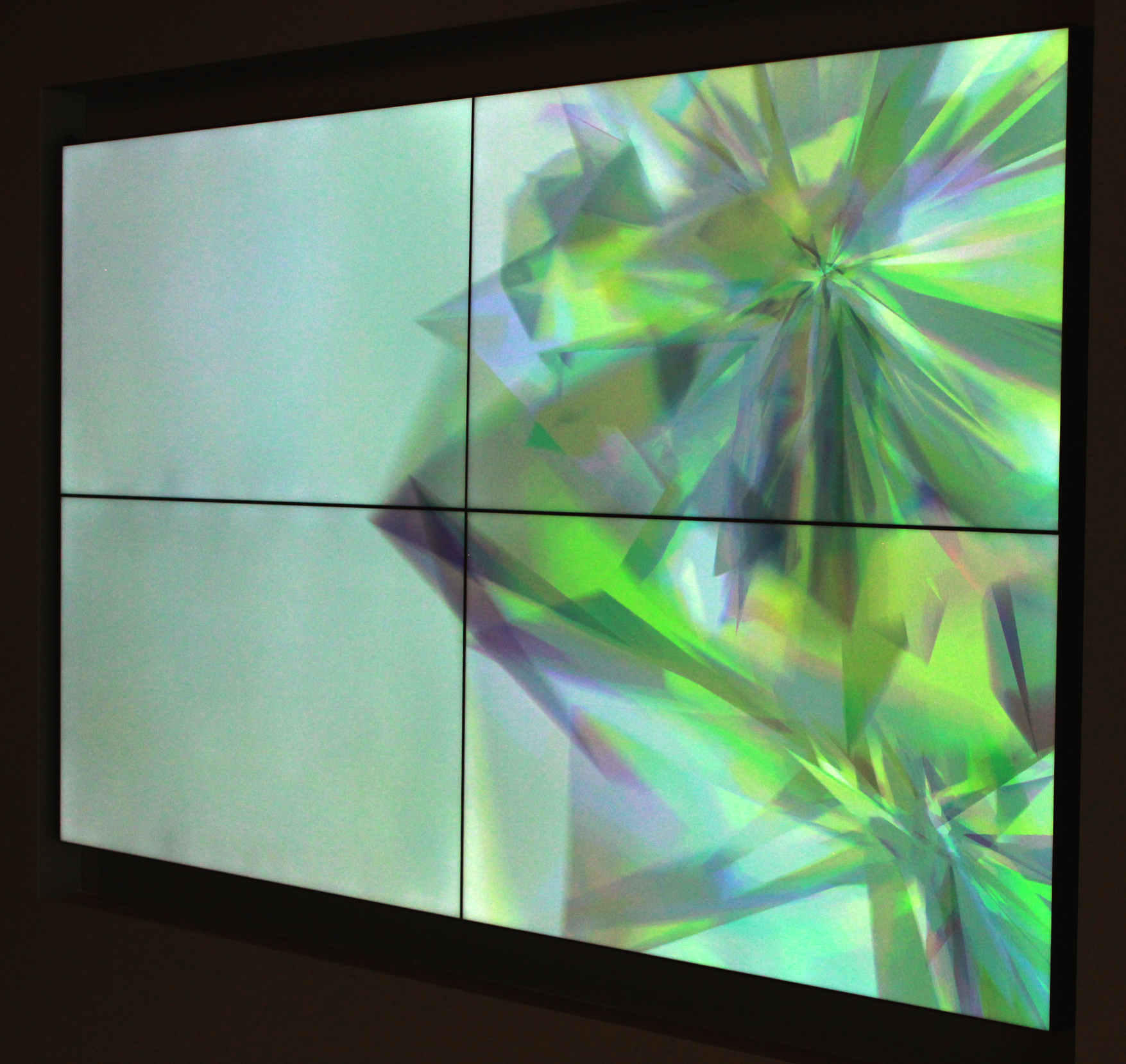

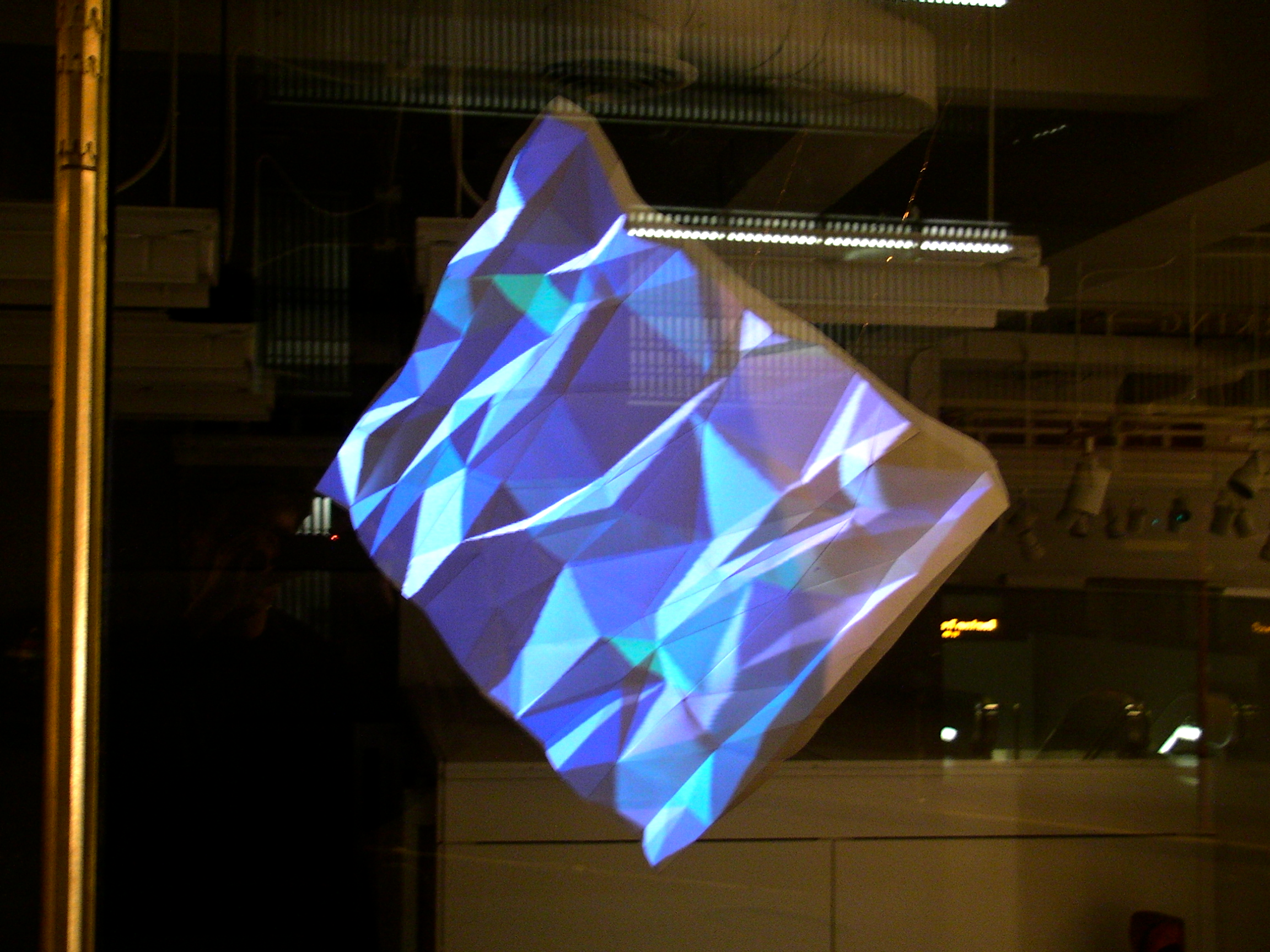

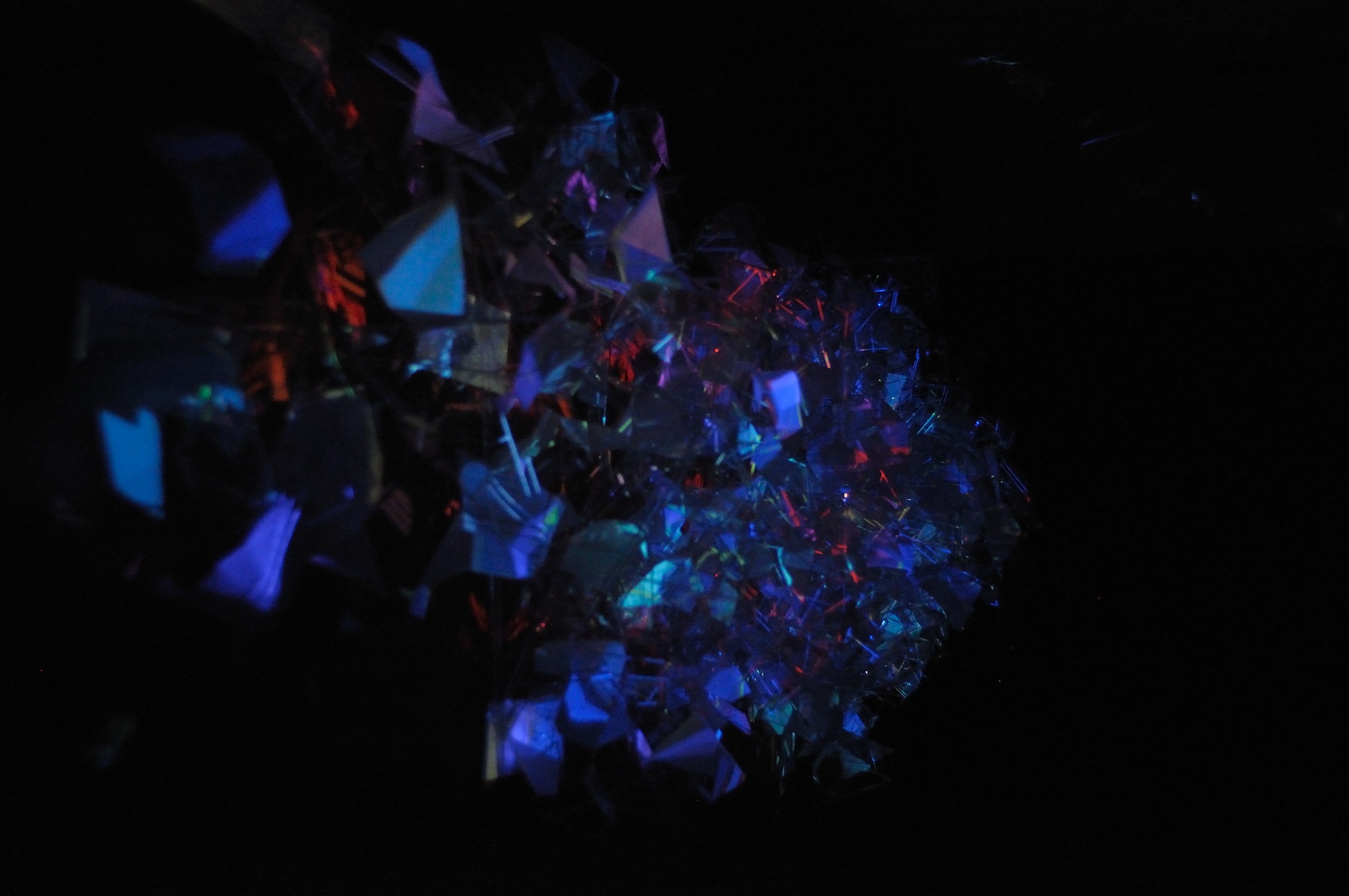

vice-versa. The other software, used only during the performance

by the artist, tracked skeleton data from a Kinect depth camera

for real-time control of audio/visual elements with physical body

gestures and movements. This tracked the physical distance between

almost every possible combination of skeletal joints (elbow to

head, foot to torso, knee to neck … you name it) and played back

specific audio and/or video clips on one of three projectors in

the room while a fourth displayed this tangle of gesture data

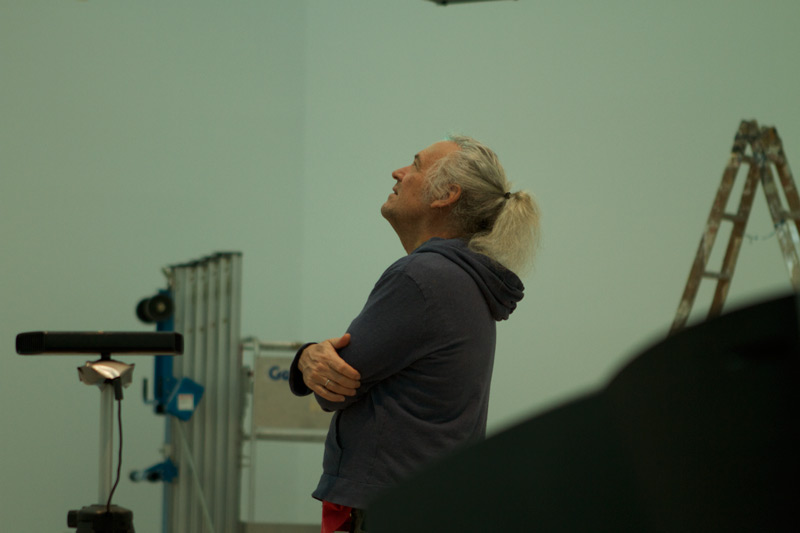

overlaid on the user’s tracked skeleton.

Process

I was contacted

by Gary’s studio assistant Reilly Donovan in late December to

write custom code to fulfill the artist’s concept as they were

hitting barriers with pre-built software. During this time

Süperfad was bringing me in as their lead creative developer, and

this project came with me. Süperfad founder and director Will Hyde

turned out to be a fan of Mr. Hill and not only agreed to send me

to Spain to help install the work but also sent along art director

Loren Judah to assist and document the process. Primarily we were

all excited about a collaboration in the realm of “pure art”

guided by Gary Hill’s vision and decades-long experience of

creating conceptual works with new electronic mediums and

combining this with Süperfad’s digital tech skills. Reilly had

been experimenting with the Kinect platform, and some of the open

source performance software that has been developed for it, which

led to their request for custom software to achieve certain

ideas. As we worked together developing the software these

objectives changed, sometimes due to a limitation we found in the

hardware, but also when a new possibility was discovered as we

began to understand the toolkit we were working with more clearly.

We continued developing the system for the MARCO performance right

up until the morning before the exhibition opening, and spent that

afternoon creating video clips (shot and edited by Süperfad art

director Loren Judah, on the spot). Hours before showtime we

frantically entered references to the media files in XML notation,

along with gesture definitions, to configure the real-time

applications we’d spent the previous month and a half creating.

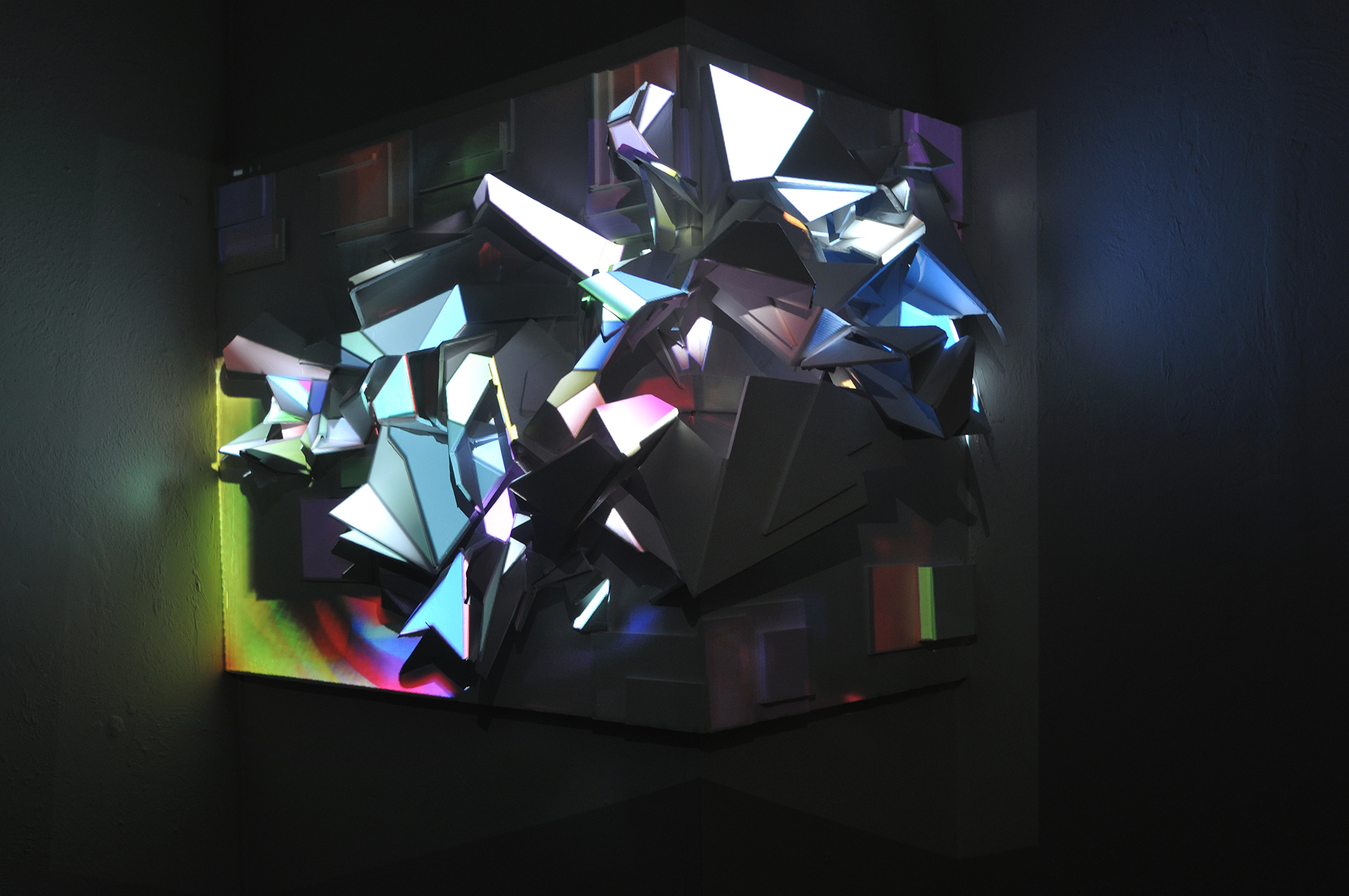

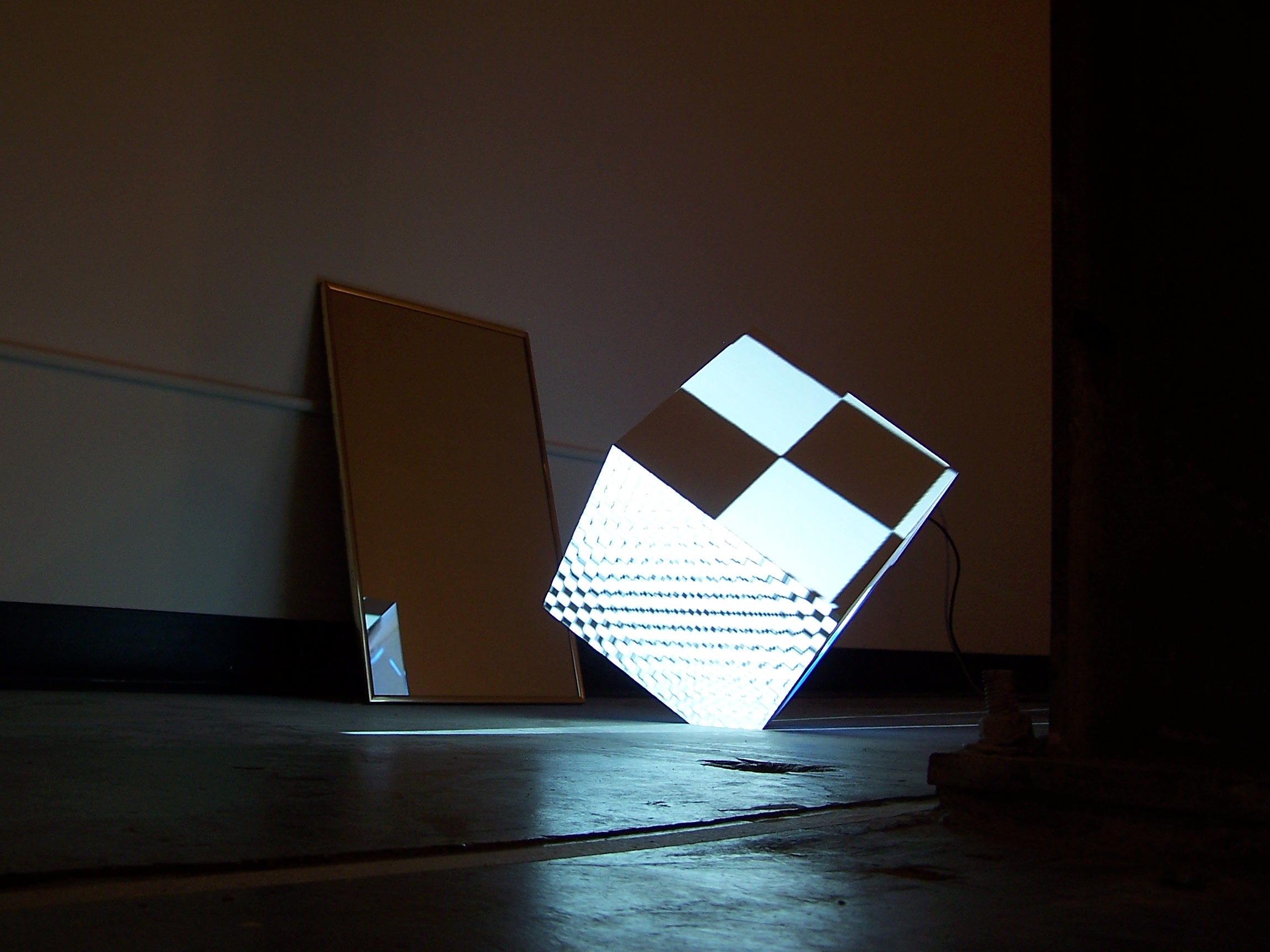

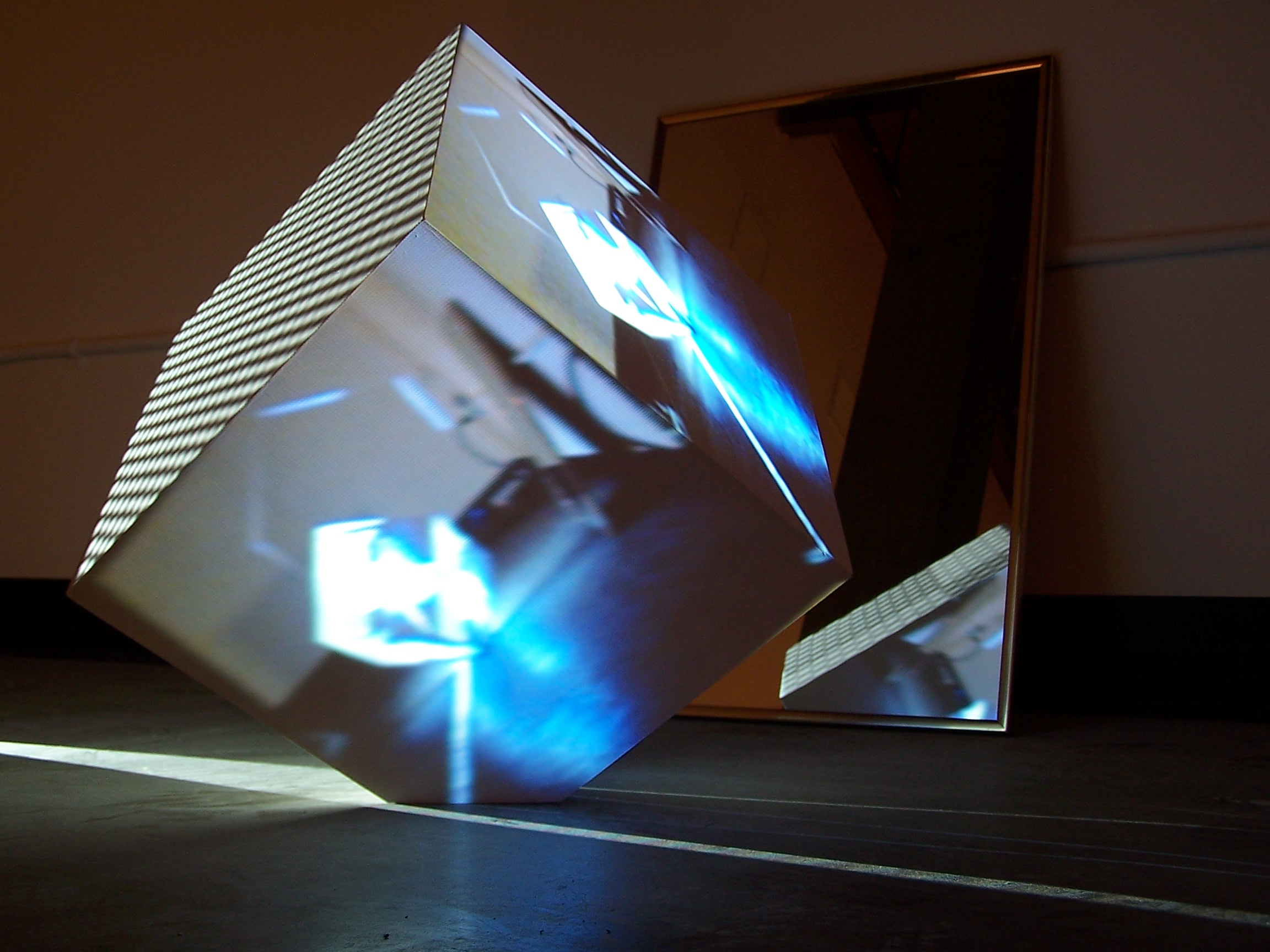

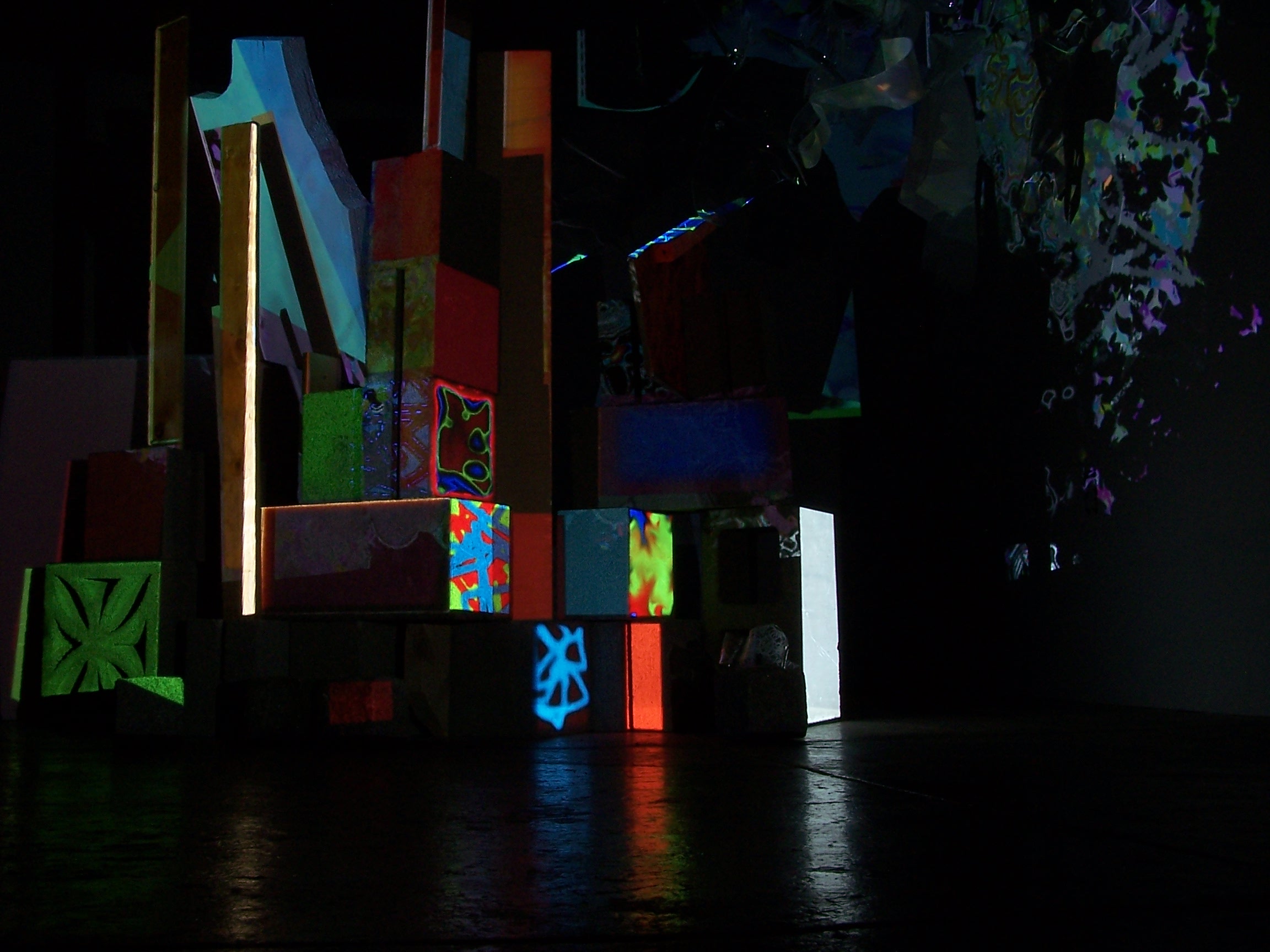

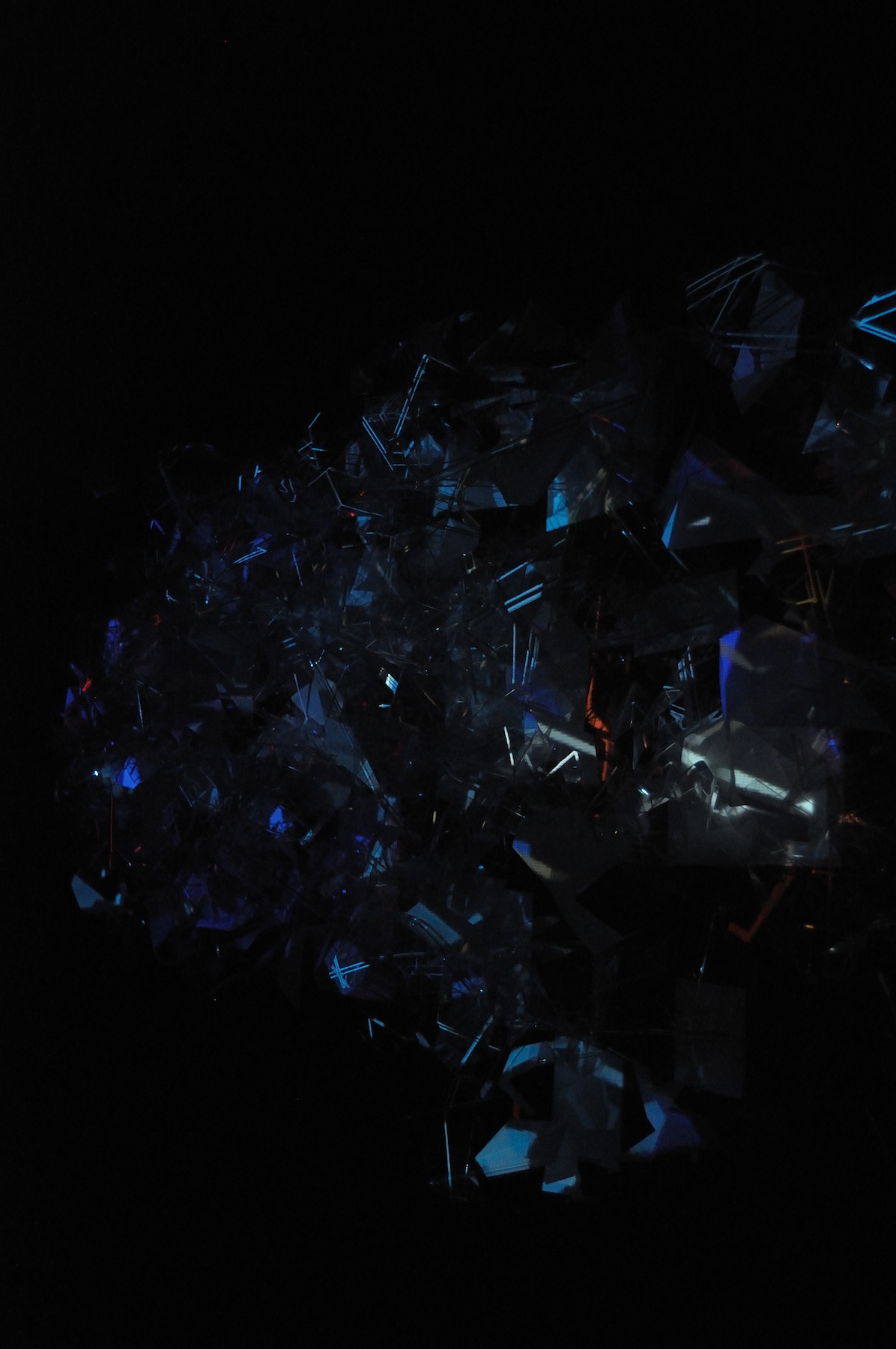

Amazingly it worked. The result was that once the performer was

being tracked by the Kinect almost any sudden series of movements

created a cacophony of enveloping media. The piece was performed

once, recorded as a four-channel video, and is now, as of this

writing, playing back daily in the Museum gallery where it was

recorded. The video recordings were captured directly from the

feed going to the projectors in the gallery, providing a

time-delayed semblance of the once live performance, sans

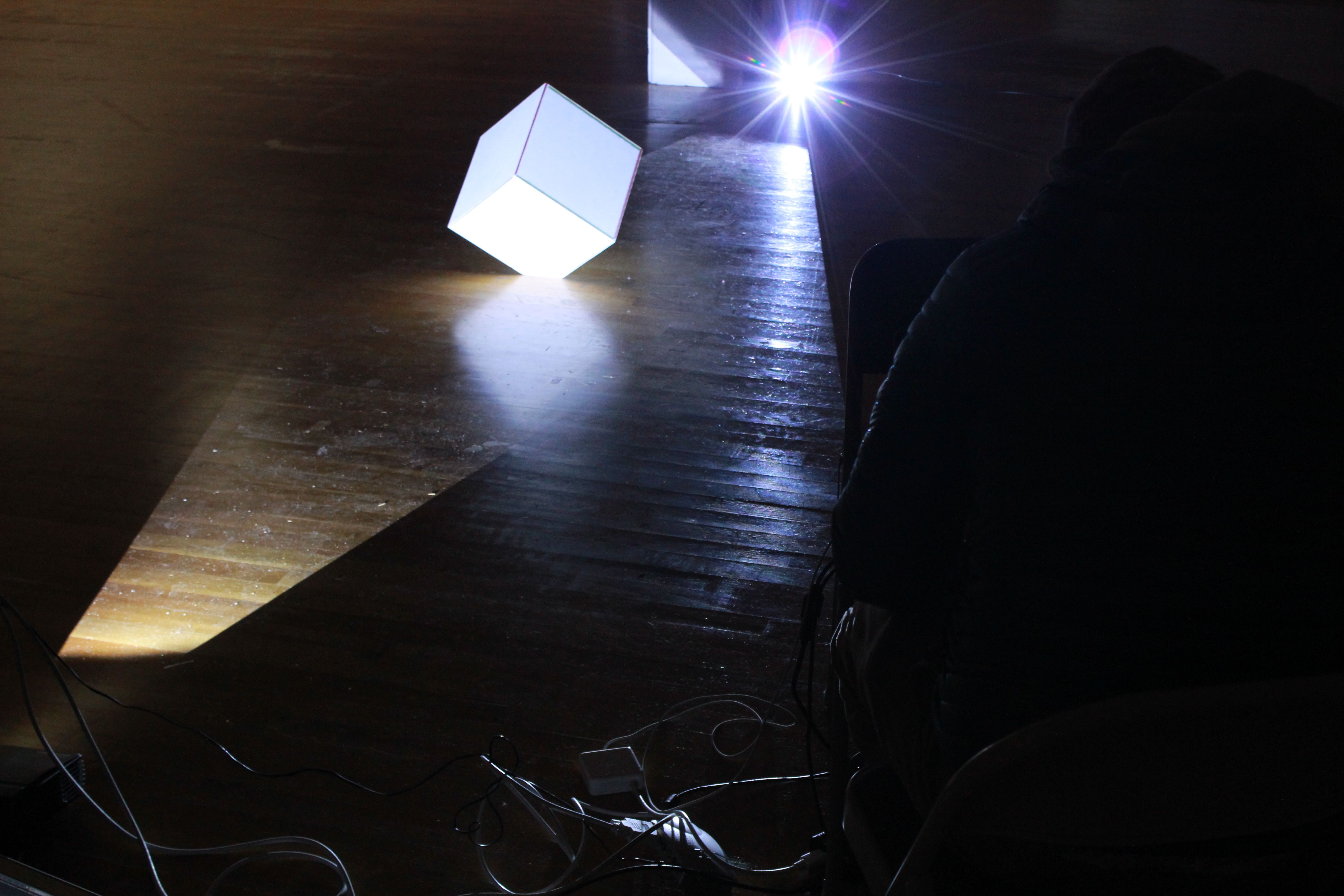

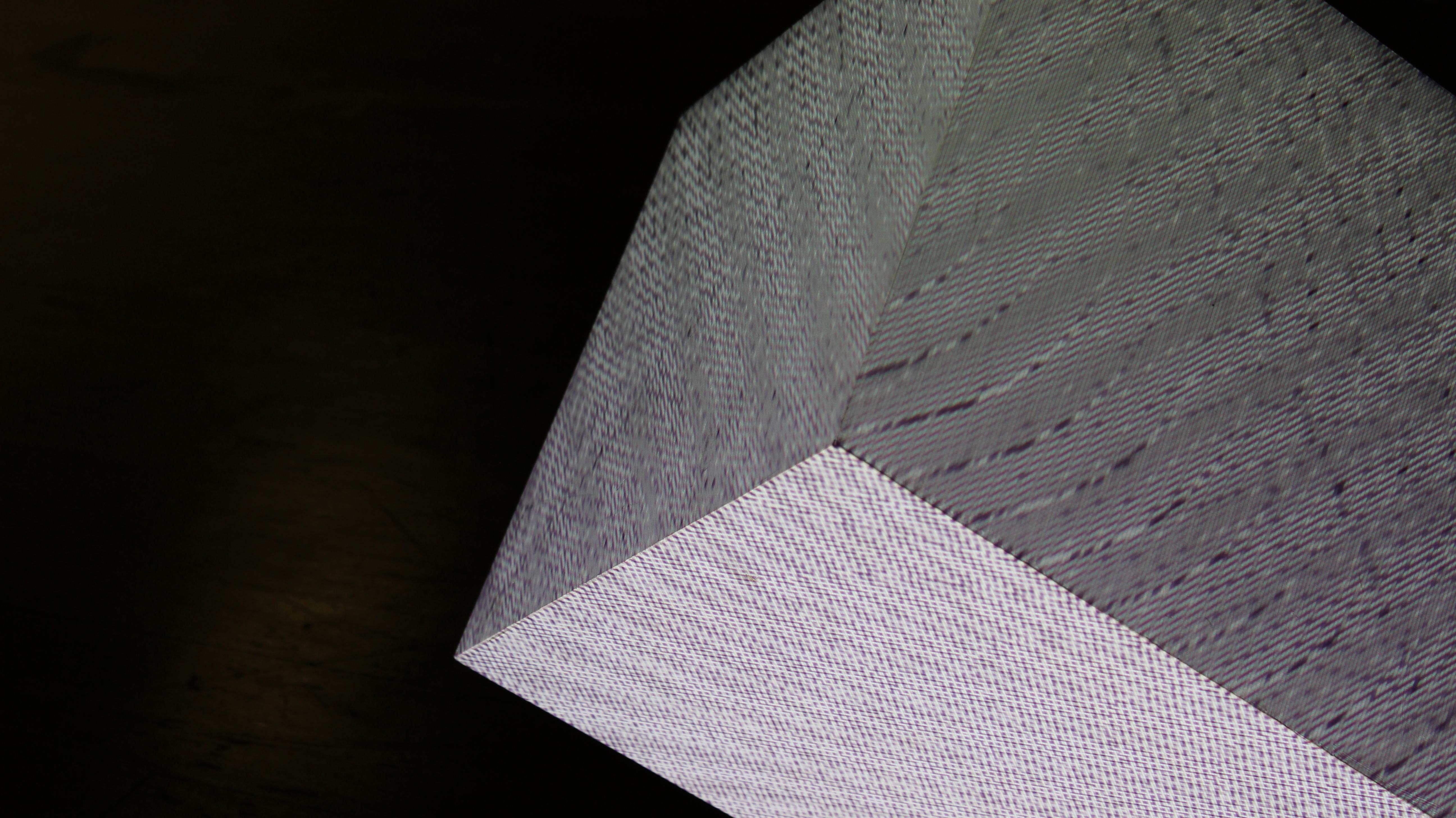

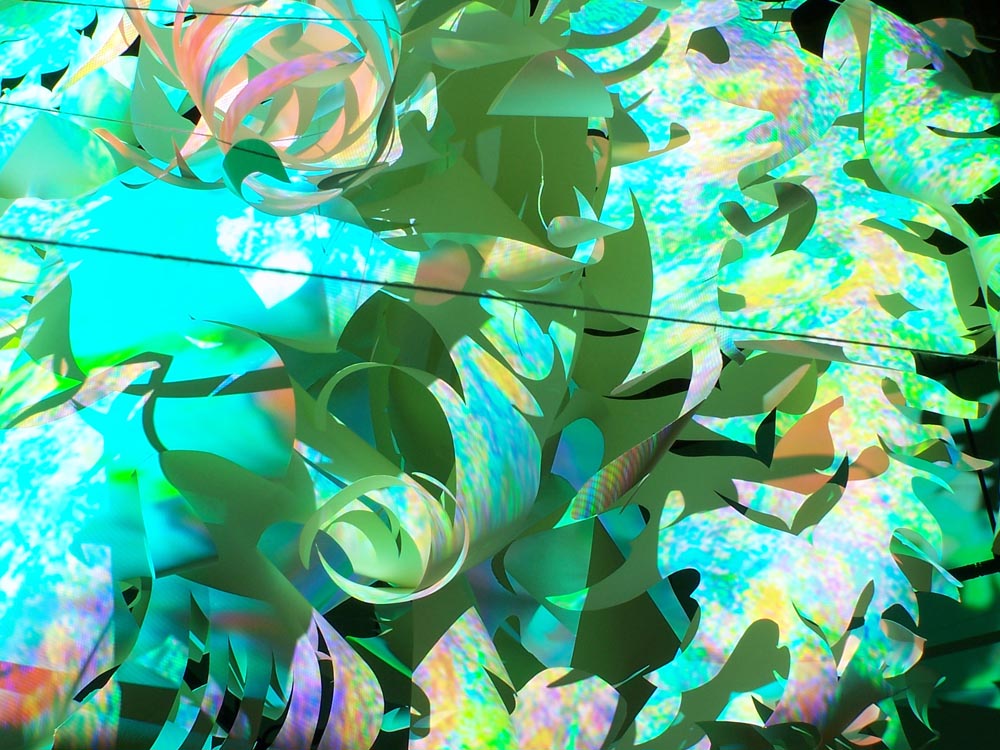

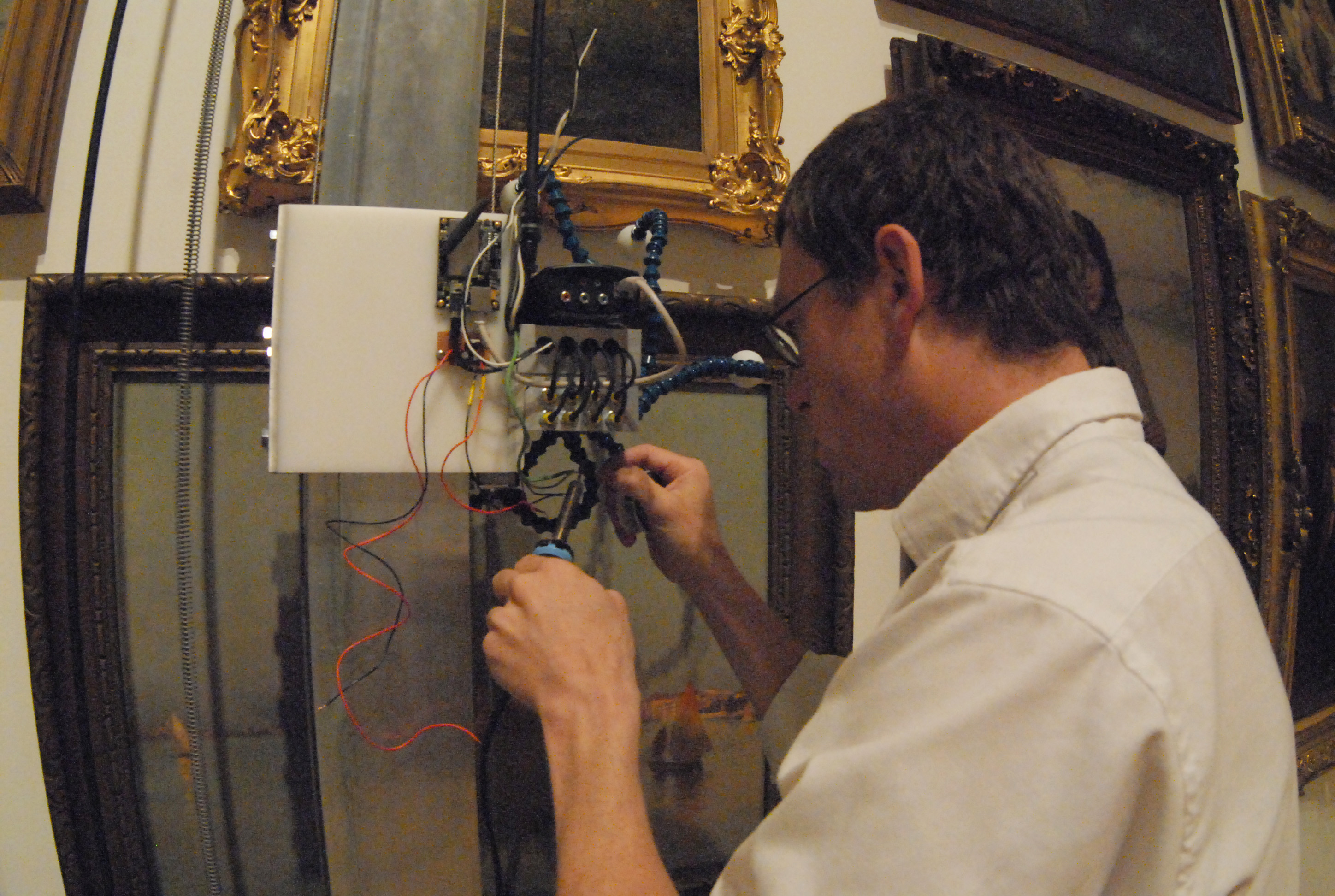

performer.

Technicalities

The software was written in the

Processing programming language using a variety of third-party

libraries for that coding environment. Several networked computers

ran the software which communicated via OSC to pass control data

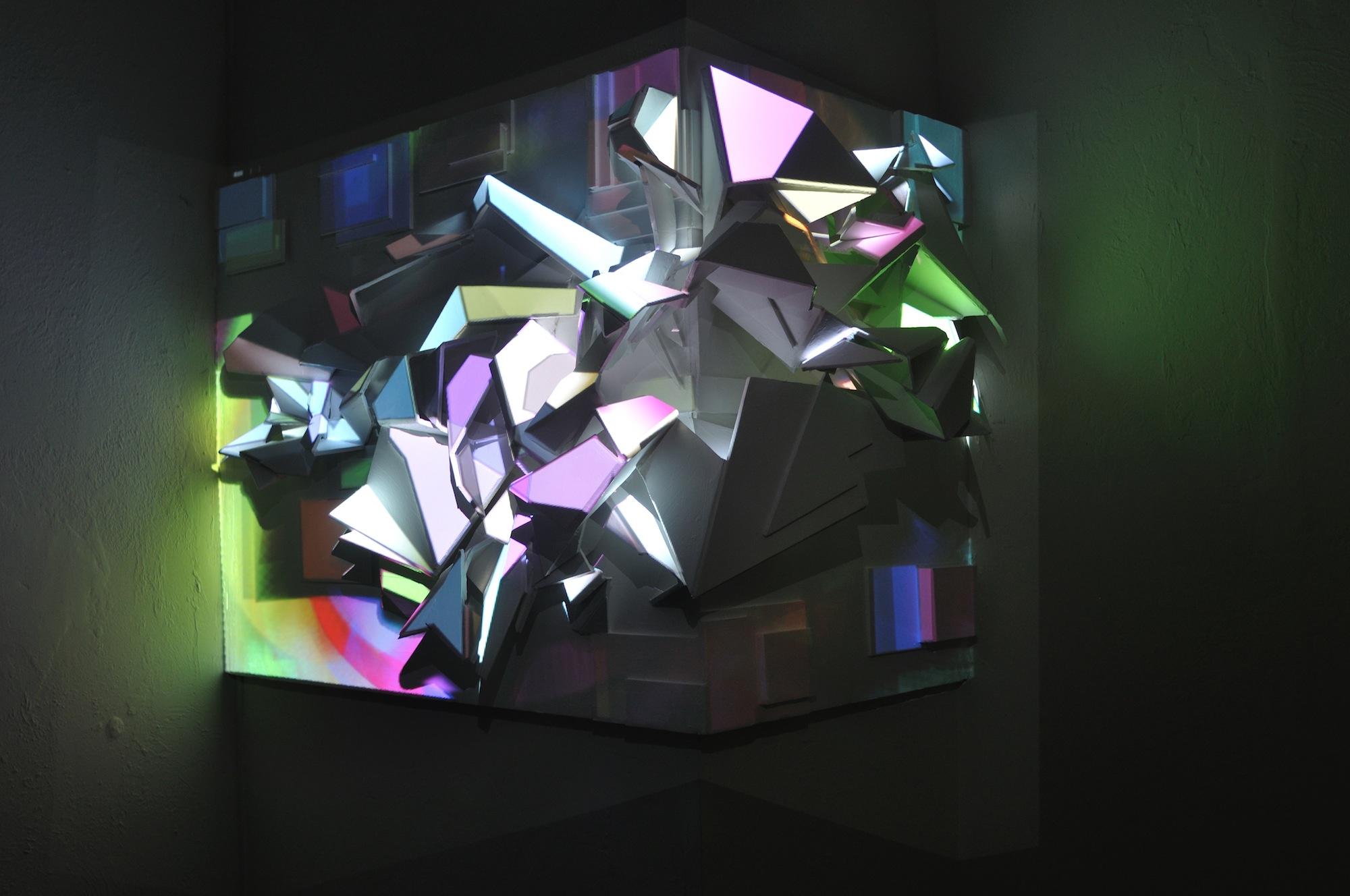

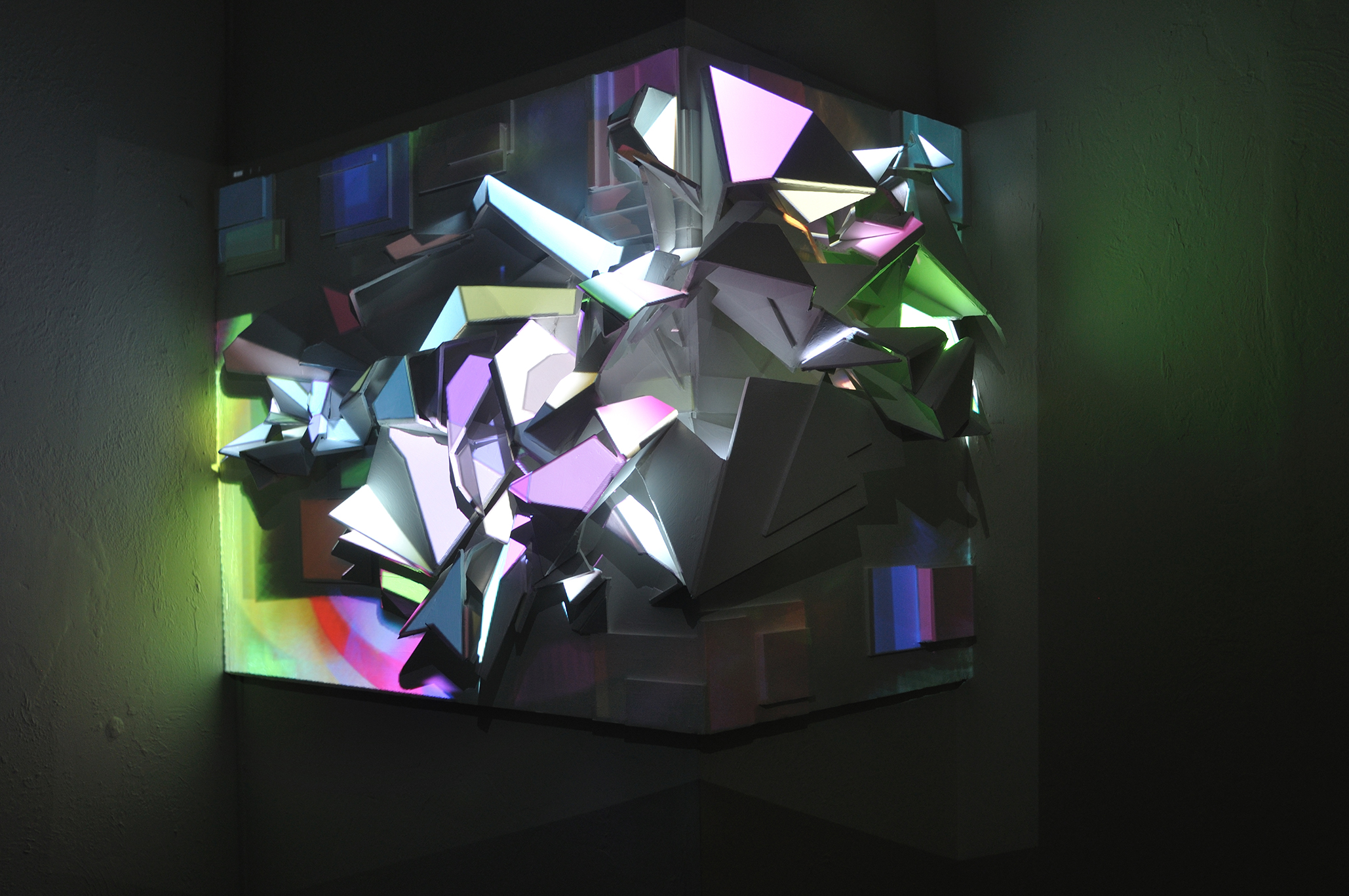

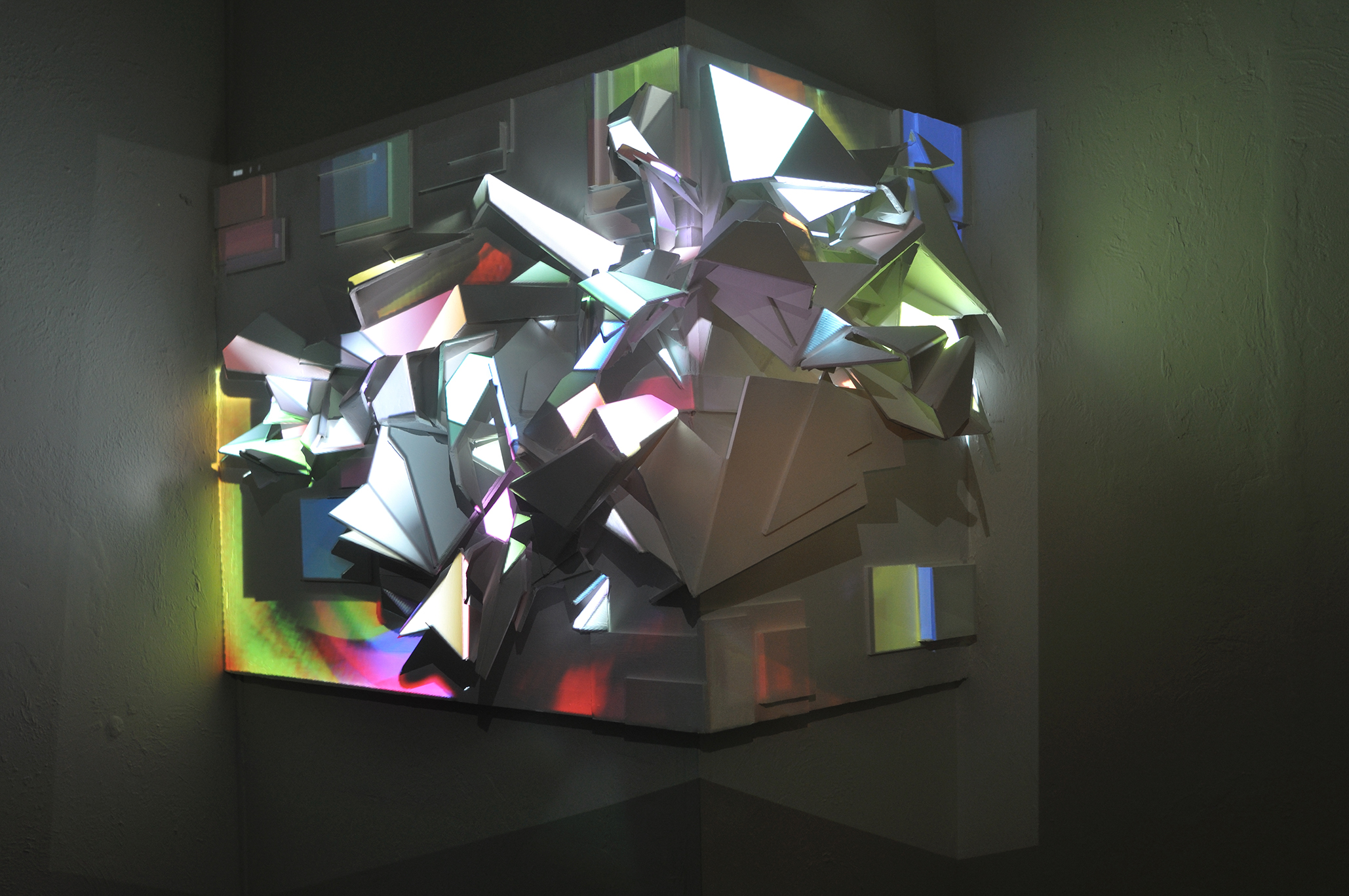

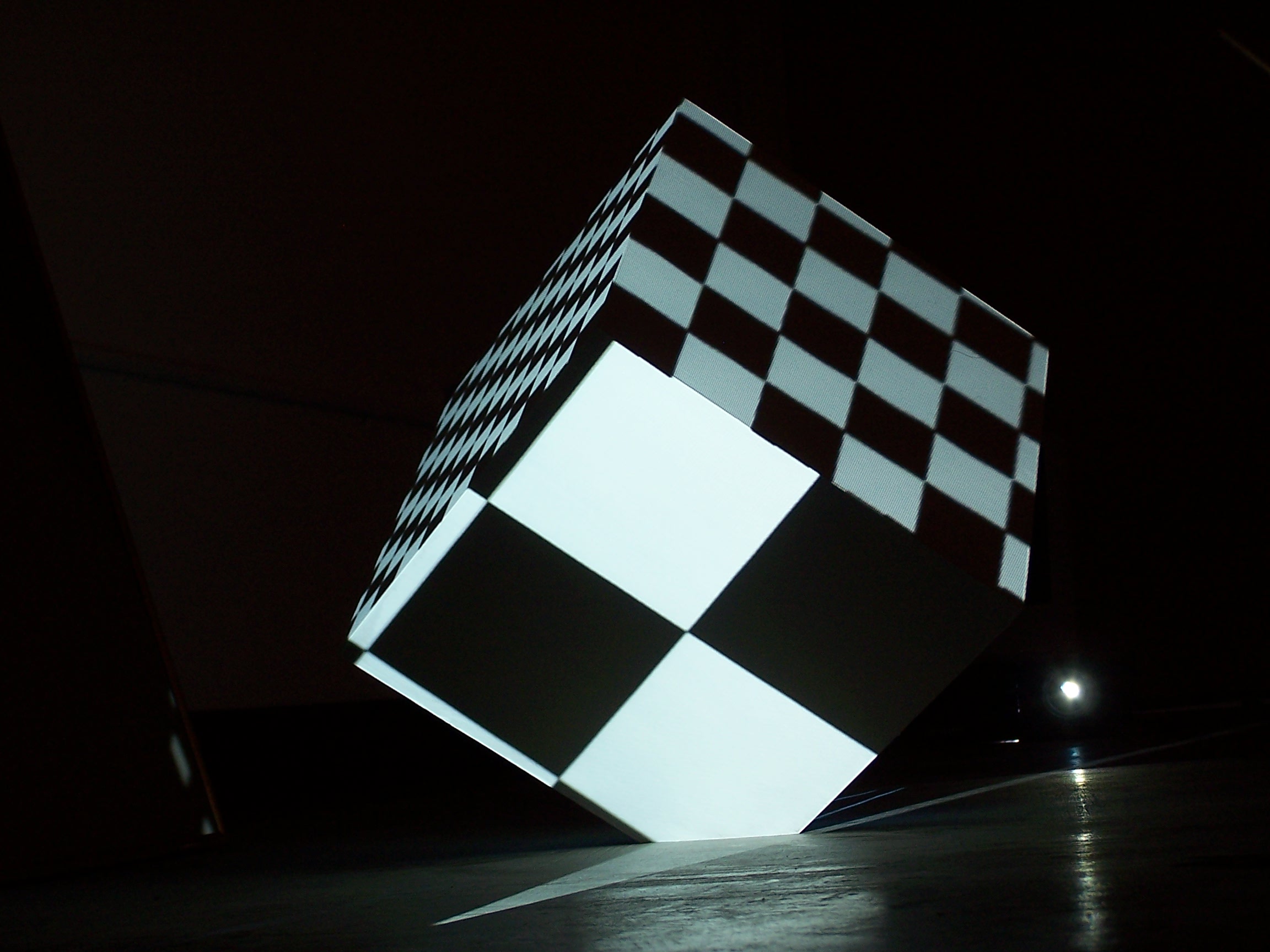

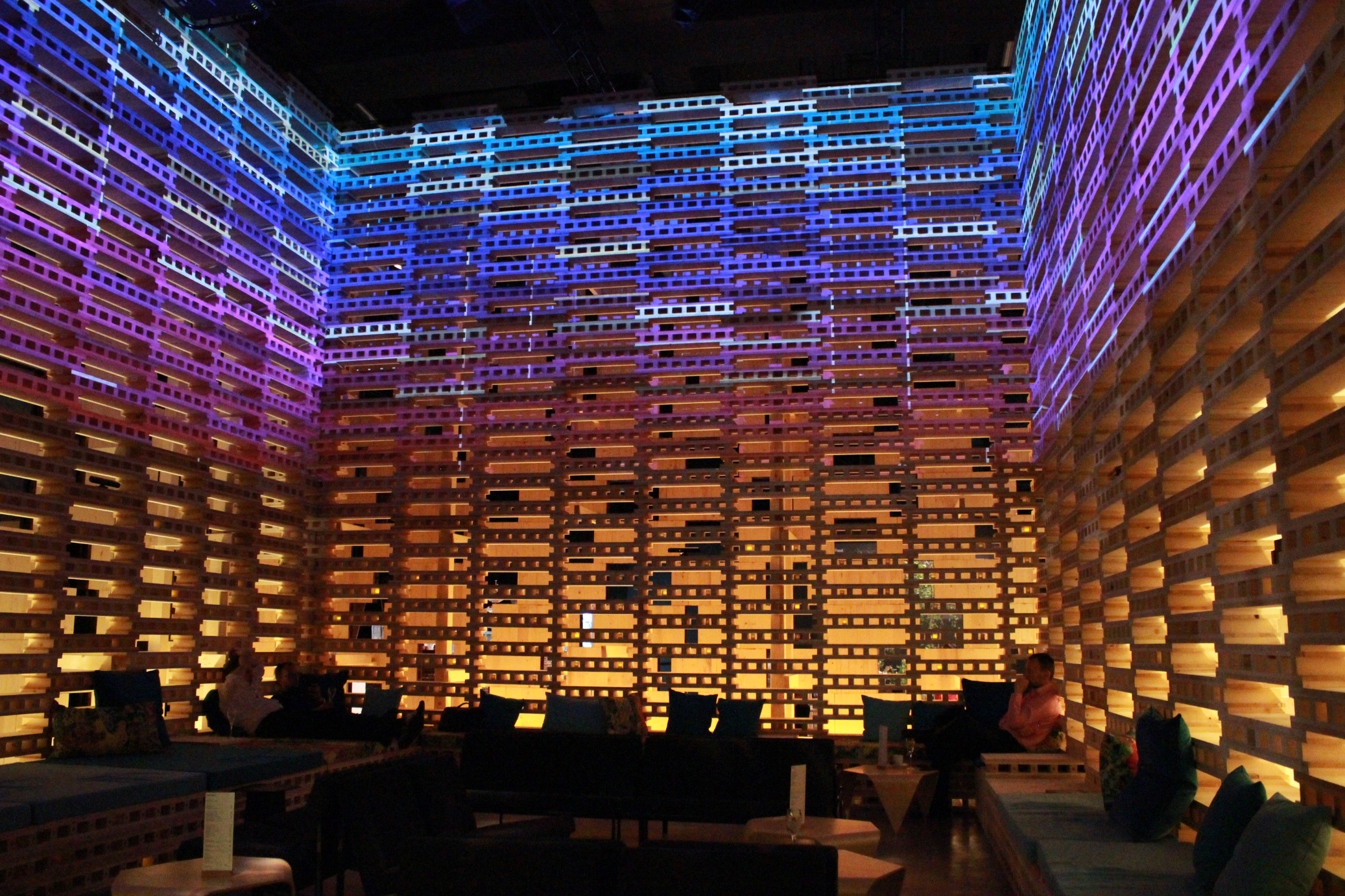

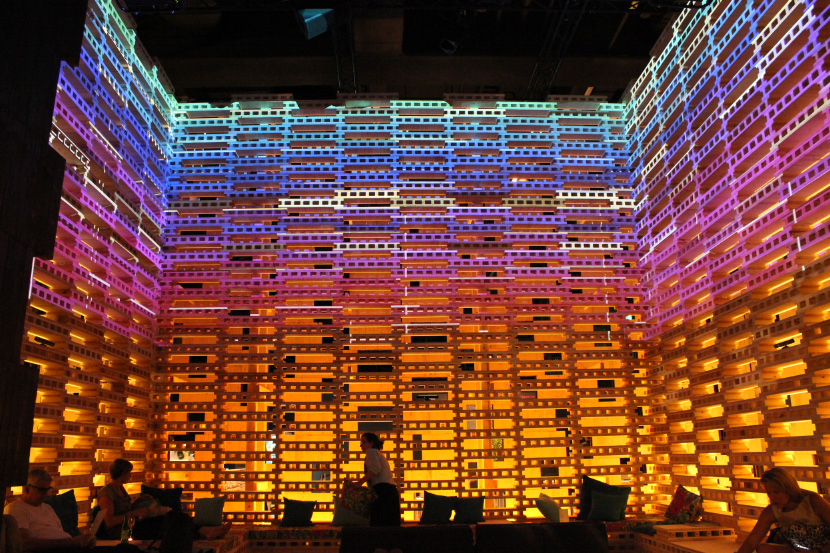

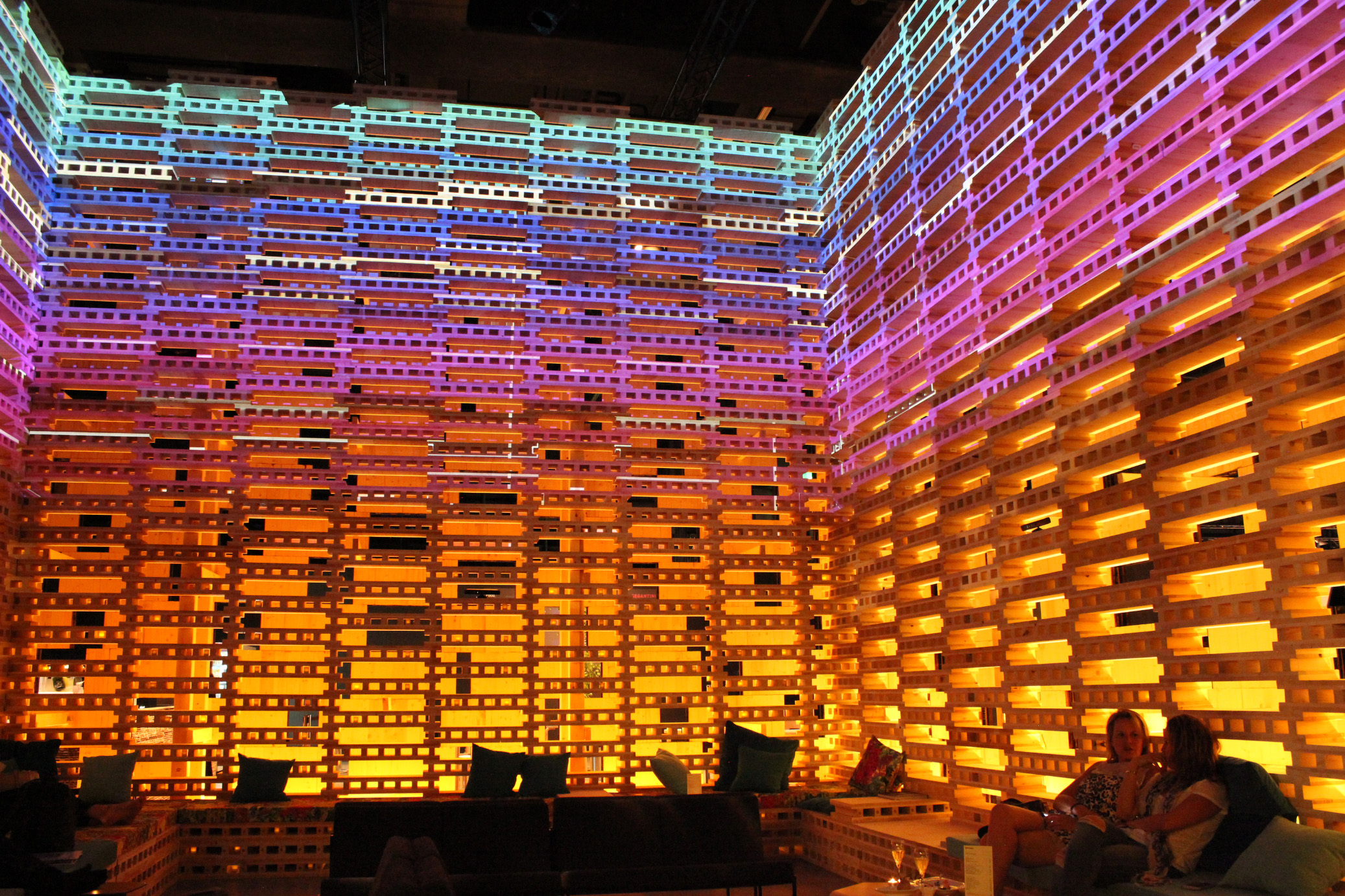

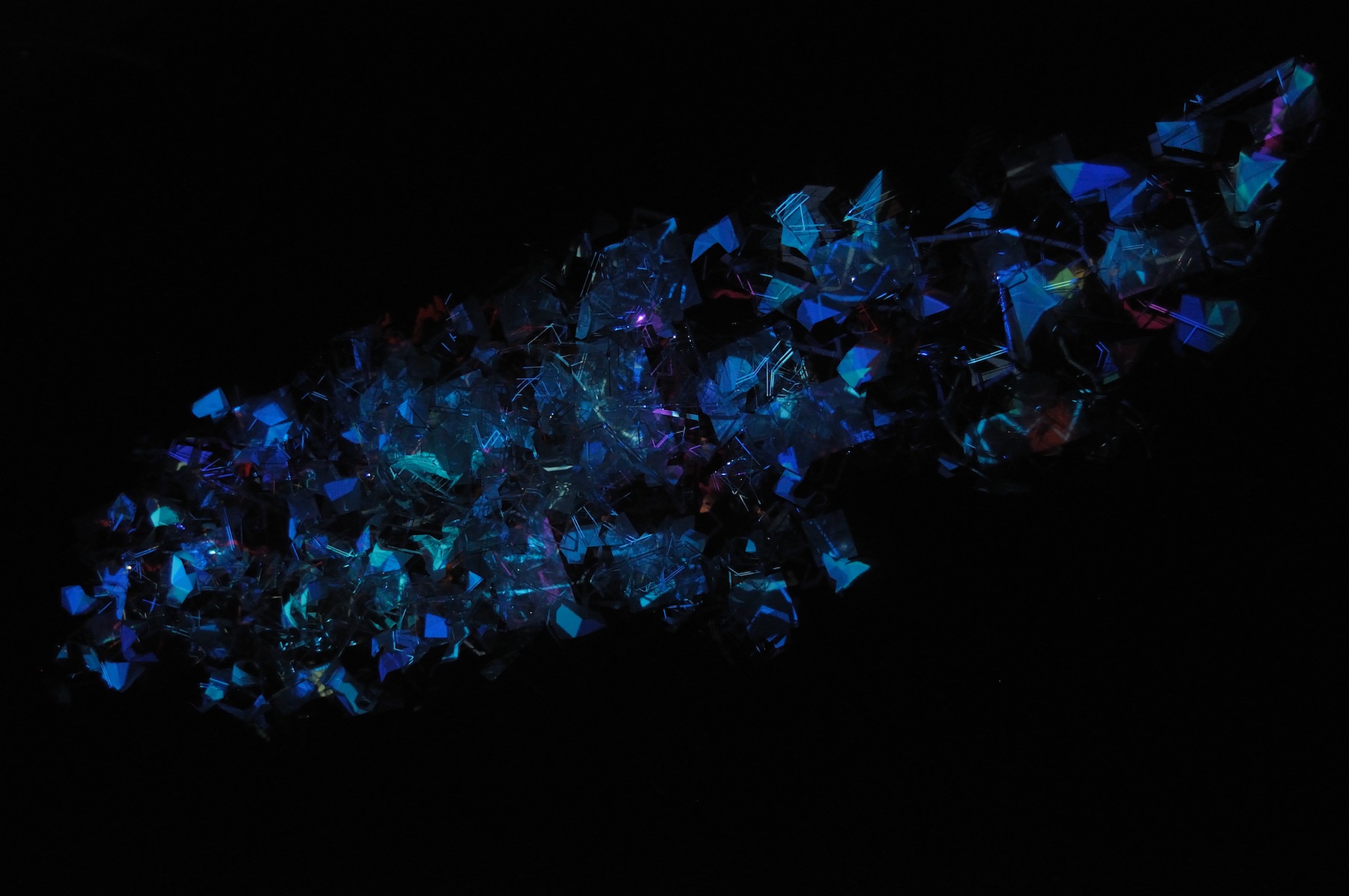

between them. The MARCO galleries are quite large, which provided a

large canvas of white gallery walls to project on. In the end we

used five projectors, each fed images by a Mac Mini, all networked

together via Ethernet. The entire hardware system was mounted to a

large circular lighting truss that the museum had on hand. For the

performance a stage sound system was utilized for audio playback

into the gallery. The first application we built,

TextFluidCongeal, was a fluid dynamics particle system where each

particle was an individual letter in a phrase. A Kinect, looking

down from above and right beside the video projector, tracked

movement of feet walking through the fluid. We used the very

stable NecTouch by French software artist Benjamin Kuperberg for

control data here. NecTouch outputs TUIO, a multi-touch focused

specification of OSC, sending the x,y locations of each foot it

detects (normally it would be fingers, but we tweaked some

settings). We also used Memo Akten’s overly-abused fluid dynamics

framework MSAFluid, which, incidentally, receives TUIO quite

readily in one of its examples for Processing. Some hacking of

these two things together, along with several dozen lines of

custom code (Processing: the duct-tape of programming languages),

allowed us to read phrases we notated in an XML file, and set

trigger points that would “congeal” an individual phrase when its

trigger area was stepped on. A simultaneous phrase would be spoken

on the overhead speakers when this occurred by sending an OSC

message to another computer on the network to trigger an

audio-only version of the software described below. The other

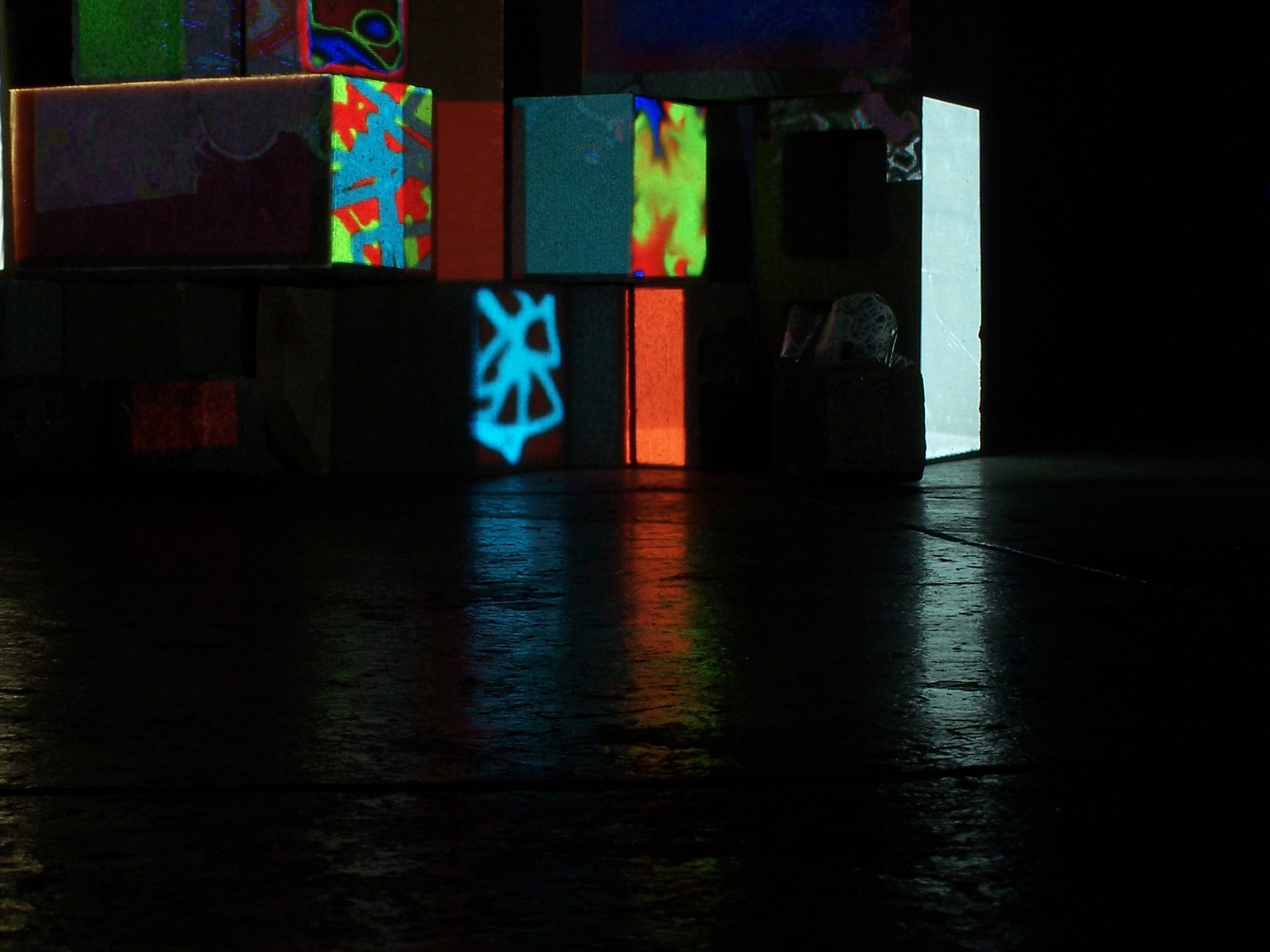

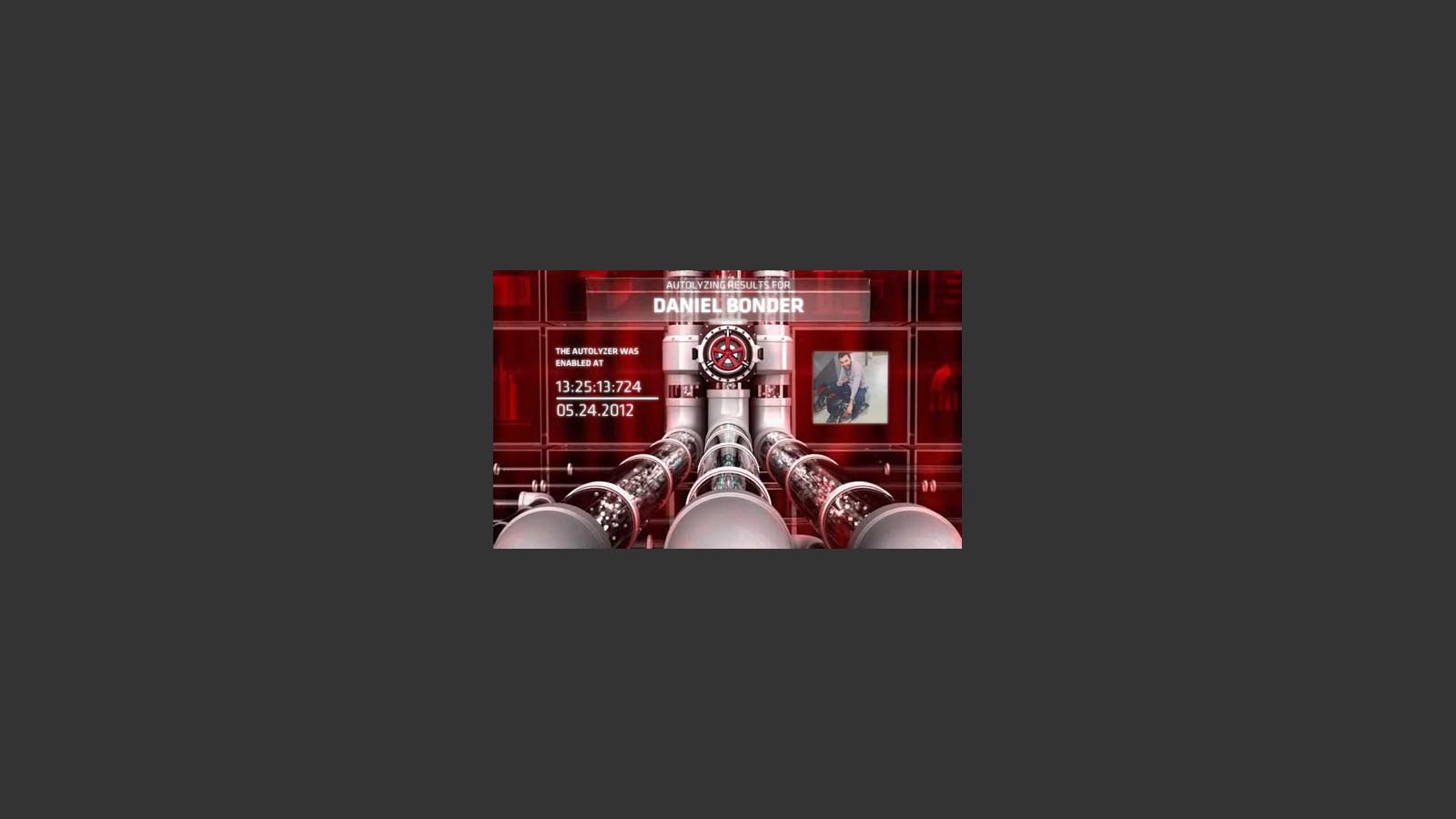

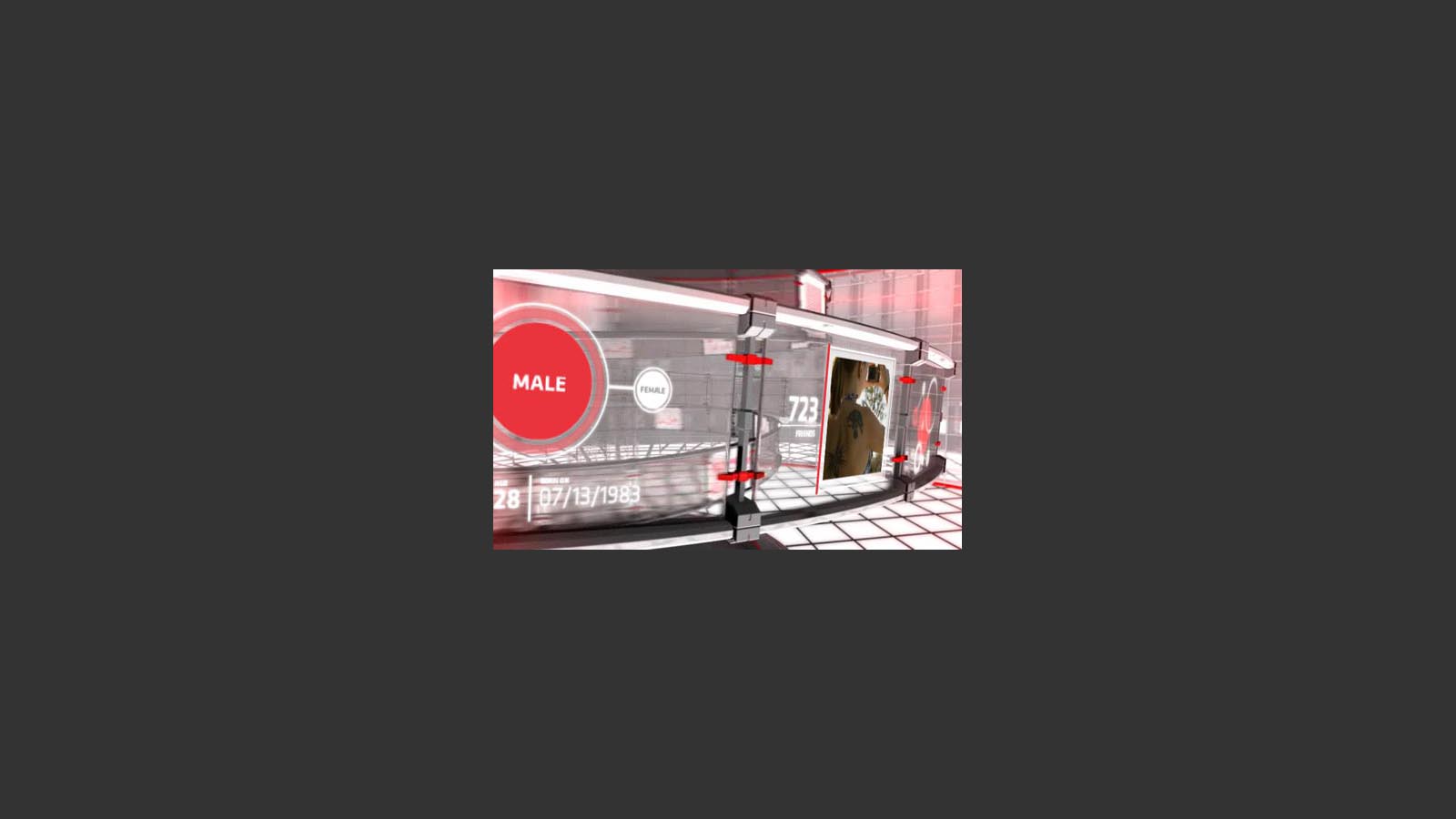

application, which I ultimately dubbed MegaFlip, tracked skeleton

data and could activate triggers when two or three joints of the

user’s skeleton were within a certain distance of each other. For

example, while the app tracked your movements, touching your head

with your right hand could trigger a video clip displayed on a

video projector, while touching your right foot with your left

hand might trigger an audio clip played back on overhead speakers.
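
The joint-distance idea above can be sketched in a few lines. This is a minimal illustration in plain Java (Processing's host language) with no Kinect library involved; the class names `Joint` and `ProximityTrigger`, the millimetre units, and the choice to fire only once when the joints first come into range are all assumptions made for the example, not MegaFlip's actual code.

```java
/** A 3D joint position; millimetres are assumed here for illustration. */
final class Joint {
    final double x, y, z;

    Joint(double x, double y, double z) {
        this.x = x;
        this.y = y;
        this.z = z;
    }

    /** Straight-line (Euclidean) distance to another joint. */
    double distanceTo(Joint other) {
        double dx = x - other.x;
        double dy = y - other.y;
        double dz = z - other.z;
        return Math.sqrt(dx * dx + dy * dy + dz * dz);
    }
}

/** Fires once each time two joints come within maxDistance of each other. */
final class ProximityTrigger {
    private final double maxDistance;
    private boolean wasInside = false; // state from the previous frame

    ProximityTrigger(double maxDistance) {
        this.maxDistance = maxDistance;
    }

    /** Call once per tracked frame; true only on the frame the joints enter range. */
    boolean update(Joint a, Joint b) {
        boolean inside = a.distanceTo(b) <= maxDistance;
        boolean fired = inside && !wasInside;
        wasInside = inside;
        return fired;
    }
}
```

In a real sketch, `update` would be called every frame with the two joint positions reported by the skeleton tracker, and a `true` result would start the mapped clip. Firing only on entry keeps a held pose from restarting the same clip on every frame.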

Additionally the software could send and receive OSC messages to

the other computers so that a gesture tracked by any one computer

could trigger playback on any other computer. Each computer’s

instance of the application was configured with an XML file. Each

node in the XML defined a relationship between joints (e.g. right

foot to left hand) and the distance those joints had to be within

to trigger a function, also defined in the XML element, along with

a reference to which audio or video file to play back and on which

computer (by IP address on the local network). Live cameras were

also hooked into the software and could be turned on and off via

various gestures. MegaFlip was constructed with multiple

Processing libraries as well. SimpleOpenNI was used for

interfacing with the Kinect and skeleton tracking. Andres

Colubri’s library GSVideo was used for audio and video playback.

Andreas Schlegel’s oscP5 library was used for OSC communication.
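
To make the per-computer XML configuration more concrete, here is a rough sketch of how such a file might be read. The original schema is not shown in this write-up, so the element name `trigger`, every attribute name, the media file names, and the IP addresses below are hypothetical; the parsing uses only the XML classes that ship with Java rather than any Processing helper.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

/** One gesture-to-media mapping; all field and attribute names are hypothetical. */
final class Mapping {
    final String jointA, jointB, action, media, targetIp;
    final double maxDistance;

    Mapping(Element e) {
        jointA = e.getAttribute("jointA");
        jointB = e.getAttribute("jointB");
        maxDistance = Double.parseDouble(e.getAttribute("maxDistance"));
        action = e.getAttribute("action");   // which function to run
        media = e.getAttribute("media");     // which file to play back
        targetIp = e.getAttribute("target"); // which computer should play it
    }
}

final class MappingConfig {
    /** Illustrative configuration: two joint pairs, each with a threshold and a target. */
    static final String EXAMPLE =
        "<mappings>"
        + "<trigger jointA='RIGHT_HAND' jointB='HEAD' maxDistance='150'"
        + " action='playVideo' media='clip01.mov' target='192.168.1.12'/>"
        + "<trigger jointA='LEFT_HAND' jointB='RIGHT_FOOT' maxDistance='200'"
        + " action='playAudio' media='idiom01.wav' target='192.168.1.13'/>"
        + "</mappings>";

    /** Parses every trigger element in the given XML text into a Mapping. */
    static List<Mapping> parse(String xml) {
        try {
            Document doc = DocumentBuilderFactory.newInstance()
                .newDocumentBuilder()
                .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
            NodeList nodes = doc.getElementsByTagName("trigger");
            List<Mapping> mappings = new ArrayList<>();
            for (int i = 0; i < nodes.getLength(); i++) {
                mappings.add(new Mapping((Element) nodes.item(i)));
            }
            return mappings;
        } catch (Exception ex) {
            throw new IllegalArgumentException("Could not read mapping XML", ex);
        }
    }
}
```

Keeping the joint pair, threshold, action, media file and target machine together in one element means a new gesture can be added by editing the file alone, which fits the hours-before-showtime configuration described earlier.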

Benjamin Kuperberg’s MapiNect was also a great inspiration for our

XML mapping schema, as we essentially created a simplified version

of this within the software. During the performance five computers

ran MegaFlip simultaneously. The original plan was to have each

connected to its own Kinect and projector, having them all track

the performer’s skeleton simultaneously and trigger various media.

We discovered though that the Kinects interfered with each other

when their field of view overlapped too much, and given the

circular arrangement of the gallery lighting grid we had to

abandon having multiple skeleton tracking computers (though I did

manage to get all five Kinects to track me for half an hour without

losing track during some tests…). Interestingly, the Kinect

facing the floor for the text fluid software described above did

not interfere with the one Kinect we used for skeleton tracking.

The system was truly put through its paces during the actual

performance, with dozens of gesture mappings sending signals to

play media around the gallery space via the network. The system

ran without a hitch during the entire performance, suggesting that

it could be stable enough for longer or more complex

configurations. It is generic enough to be used for a

near-infinite variety of unique performances and interactive

installations due to its ultra-configurable nature.
